On Cold Posteriors of Probabilistic Neural Networks: Understanding the Cold Posterior Effect and A New Way to Learn Cold Posteriors with Tight Generalization Guarantees


Oct 20, 2024

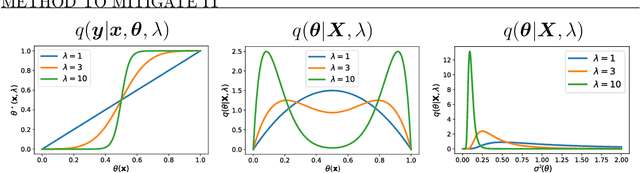

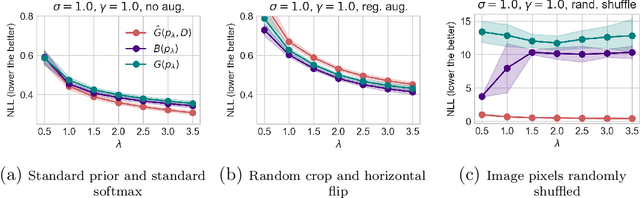

Bayesian inference provides a principled probabilistic framework for quantifying uncertainty by updating beliefs based on prior knowledge and observed data through Bayes' theorem. In Bayesian deep learning, neural network weights are treated as random variables with prior distributions, allowing for a probabilistic interpretation and quantification of predictive uncertainty. However, Bayesian methods lack theoretical generalization guarantees for unseen data. PAC-Bayesian analysis addresses this limitation by offering a frequentist framework to derive generalization bounds for randomized predictors, thereby certifying the reliability of Bayesian methods in machine learning. Temperature $T$, or inverse temperature $\lambda = \frac{1}{T}$, originally from statistical mechanics, arises naturally in several areas of statistical inference, including Bayesian inference and PAC-Bayesian analysis. In Bayesian inference, when $T < 1$ ("cold" posteriors), the likelihood is up-weighted, resulting in a sharper posterior distribution. Conversely, when $T > 1$ ("warm" posteriors), the likelihood is down-weighted, leading to a more diffuse posterior distribution. By balancing the influence of the observed data against the regularization imposed by the prior, temperature adjustments can address underfitting or overfitting in Bayesian models and thereby improve predictive performance.
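The effect of temperature on posterior sharpness can be illustrated on a toy problem. The sketch below is not taken from the paper: it assumes a one-dimensional conjugate Gaussian model (Gaussian prior on a mean parameter, Gaussian likelihood with known noise variance) and assumes the tempered posterior takes the form $p_T(\theta \mid D) \propto p(D \mid \theta)^{1/T}\, p(\theta)$, that is, only the likelihood is tempered. The function name, default parameters, and data are hypothetical.

```python
def tempered_gaussian_posterior(data, temperature,
                                prior_mean=0.0, prior_var=1.0, noise_var=1.0):
    """Closed-form tempered posterior for a Gaussian mean (illustrative only).

    Assumed model: theta ~ N(prior_mean, prior_var), x_i ~ N(theta, noise_var).
    Assumed tempering: the likelihood is raised to the power lambda = 1 / T.
    Returns the posterior mean and variance of theta.
    """
    lam = 1.0 / temperature                      # inverse temperature
    n = len(data)
    # Tempering scales the data term of the posterior precision by lambda.
    precision = 1.0 / prior_var + lam * n / noise_var
    mean = (prior_mean / prior_var + lam * sum(data) / noise_var) / precision
    return mean, 1.0 / precision


# Hypothetical observations, used only to show the trend.
data = [1.8, 2.1, 2.4, 1.9]
for T in (0.5, 1.0, 2.0):
    mean, var = tempered_gaussian_posterior(data, T)
    print(f"T={T}: posterior mean={mean:.3f}, posterior variance={var:.3f}")
```

Under these assumptions, $T < 1$ yields a smaller posterior variance and a mean pulled closer to the sample average, while $T > 1$ yields a larger variance and a mean pulled closer to the prior. This matches the qualitative description of cold and warm posteriors above.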
