Network Embedding: on Compression and Learning

Paper and Code

Jul 08, 2019

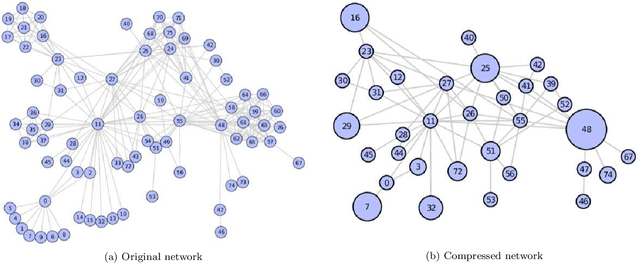

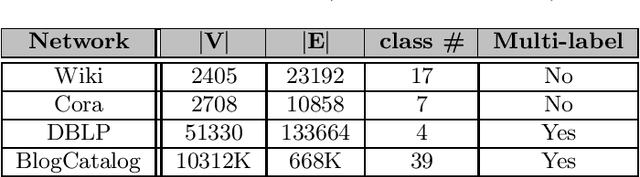

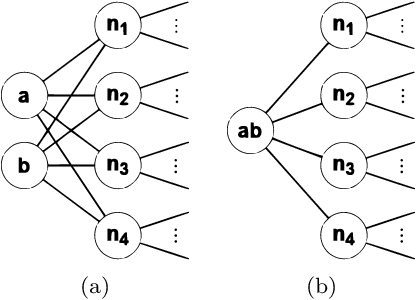

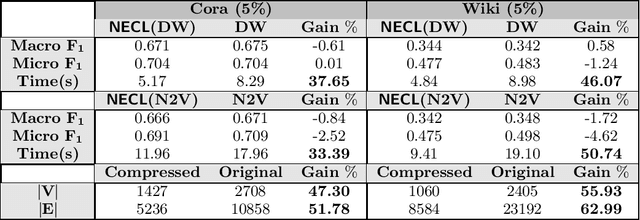

Recently, network embedding that encodes structural information of graphs into a vector space has become popular for network analysis. Although recent methods show promising performance for various applications, the huge sizes of graphs may hinder a direct application of existing network embedding method to them. This paper presents NECL, a novel efficient Network Embedding method with two goals. 1) Is there an ideal Compression of a network? 2) Will the compression of a network significantly boost the representation Learning of the network? For the first problem, we propose a neighborhood similarity based graph compression method that compresses the input graph to get a smaller graph without losing any/much information about the global structure of the graph and the local proximity of the vertices in the graph. For the second problem, we use the compressed graph for network embedding instead of the original large graph to bring down the embedding cost. NECL is a general meta-strategy to improve the efficiency of all of the state-of-the-art graph embedding algorithms based on random walks, including DeepWalk and Node2vec, without losing their effectiveness. Extensive experiments on large real-world networks validate the efficiency of NECL method that yields an average improvement of 23 - 57% embedding time, including walking and learning time without decreasing classification accuracy as evaluated on single and multi-label classification tasks on real-world graphs such as DBLP, BlogCatalog, Cora and Wiki.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge