Natural and Adversarial Error Detection using Invariance to Image Transformations


Feb 01, 2019

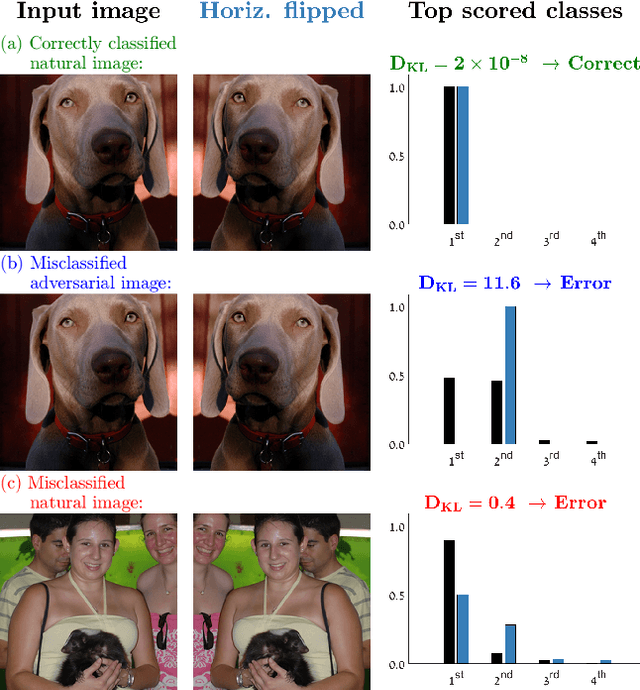

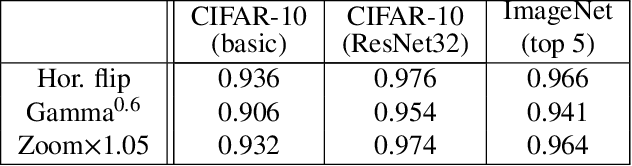

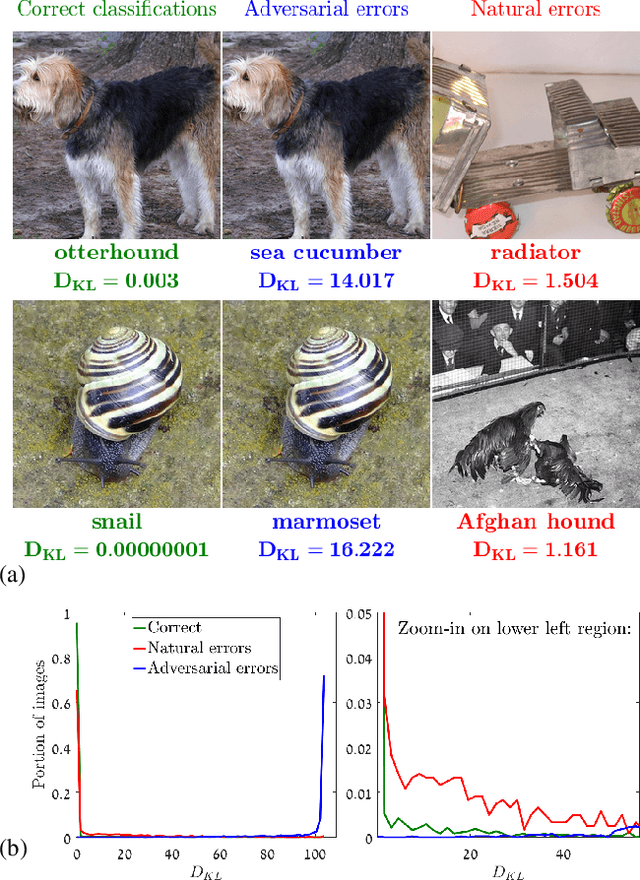

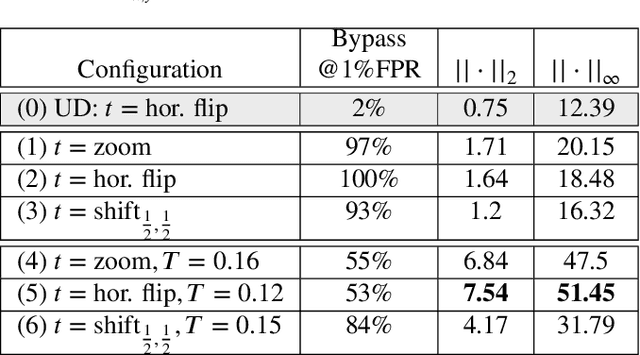

We propose an approach to distinguish between correct and incorrect image classifications. Our approach can detect misclassifications that occur either *unintentionally* ("natural errors") or due to *intentional adversarial attacks* ("adversarial errors"), both in a single *unified framework*. It is based on the observation that correctly classified images tend to exhibit robust and consistent classifications under certain image transformations (e.g., horizontal flip, small image translation). In contrast, incorrectly classified images (whether due to adversarial errors or natural errors) tend to exhibit large variations in classification results under such transformations. Our approach does not require any modification or retraining of the classifier, and hence can be applied to any pre-trained classifier. We further use state-of-the-art targeted adversarial attacks to demonstrate that even when the adversary has full knowledge of our method, the adversarial distortion needed to bypass our detector is *no longer imperceptible to the human eye*. Our approach obtains state-of-the-art results compared to previous adversarial detection methods, surpassing them by a large margin.
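The core observation (a correct prediction stays stable under mild transformations, while an incorrect one tends to change) can be illustrated with a minimal sketch. Everything here is a hypothetical illustration, not the authors' detector: the two transformations (`hflip`, a one-pixel wrap-around `shift_right`), the agreement statistic, the `min_agreement` threshold, and the 3-class `toy_classifier` are all assumptions. The abstract does not specify how the variation is turned into a decision, and the paper's method may combine these signals differently.

```python
import math
from typing import Callable, List, Sequence

Image = List[List[float]]                    # grayscale image as rows of pixel values
Classifier = Callable[[Image], List[float]]  # any pre-trained model: image -> logits


def hflip(img: Image) -> Image:
    """Mirror the image left-to-right."""
    return [list(reversed(row)) for row in img]


def shift_right(img: Image, k: int = 1) -> Image:
    """Small translation: shift every row k pixels right, wrapping around (assumed)."""
    w = len(img[0])
    return [[row[(j - k) % w] for j in range(w)] for row in img]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def argmax(xs: Sequence[float]) -> int:
    return max(range(len(xs)), key=lambda i: xs[i])


TRANSFORMS = [hflip, shift_right]  # hypothetical transformation set


def consistency(classify: Classifier, img: Image, transforms=TRANSFORMS):
    """Compare the prediction on img with predictions on transformed copies.

    Returns (label, agreement, mean_prob): the original predicted label, the
    fraction of transforms that keep that label, and the mean softmax
    probability the transformed copies assign to it.
    """
    label = argmax(classify(img))
    agree, probs = 0, []
    for t in transforms:
        p = softmax(classify(t(img)))
        if argmax(p) == label:
            agree += 1
        probs.append(p[label])
    return label, agree / len(transforms), sum(probs) / len(probs)


def is_suspect(classify: Classifier, img: Image, min_agreement: float = 1.0) -> bool:
    """Flag a likely misclassification when the label is unstable (threshold assumed)."""
    _, agreement, _ = consistency(classify, img)
    return agreement < min_agreement


def toy_classifier(img: Image) -> List[float]:
    """Hypothetical 3-class model: bright / dark / 'corner spike'.

    Class 2 fires on a single position-specific pixel contrast, standing in
    for a fragile feature that an adversarial perturbation might exploit.
    """
    total = sum(sum(row) for row in img)
    spike = img[0][0] - img[0][1]
    return [total, 4.0 - total, 50.0 * spike]


clean = [[0.5] * 4 for _ in range(4)]   # uniform bright image -> class 0
spiked = [[0.0] * 4 for _ in range(4)]  # dark image -> class 1 ...
spiked[0][0] = 0.5                      # ... pushed to class 2 by one pixel

if __name__ == "__main__":
    for name, img in [("clean", clean), ("spiked", spiked)]:
        print(name, consistency(toy_classifier, img), is_suspect(toy_classifier, img))
```

Because the check only calls `classify`, it treats the model as a black box, in line with the abstract's point that no modification or retraining of the classifier is needed. In this toy case, the one-pixel "spike" flips the prediction to class 2, but both transformed copies revert to class 1, so the image is flagged.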
