My House, My Rules: Learning Tidying Preferences with Graph Neural Networks

Paper and Code

Nov 04, 2021

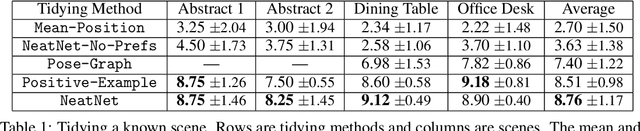

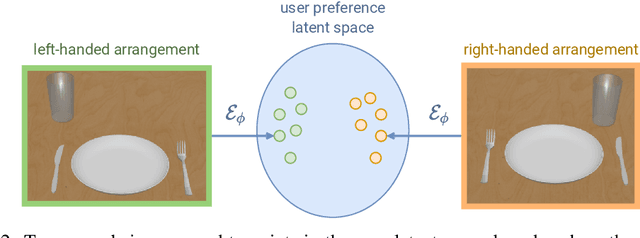

Robots that arrange household objects should do so according to the user's preferences, which are inherently subjective and difficult to model. We present NeatNet: a novel Variational Autoencoder architecture using Graph Neural Network layers, which can extract a low-dimensional latent preference vector from a user by observing how they arrange scenes. Given any set of objects, this vector can then be used to generate an arrangement which is tailored to that user's spatial preferences, with word embeddings used for generalisation to new objects. We develop a tidying simulator to gather rearrangement examples from 75 users, and demonstrate empirically that our method consistently produces neat and personalised arrangements across a variety of rearrangement scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge