Multi-Scale Label Relation Learning for Multi-Label Classification Using 1-Dimensional Convolutional Neural Networks

Paper and Code

Jul 13, 2021

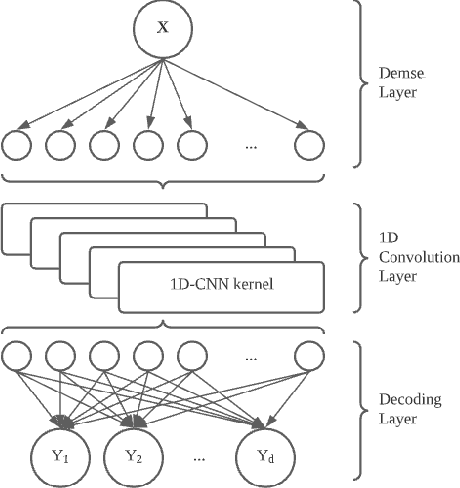

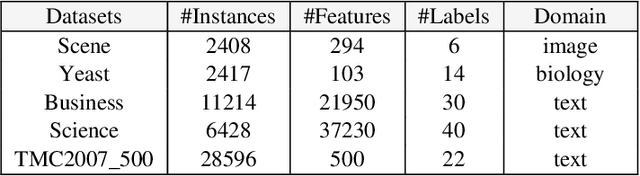

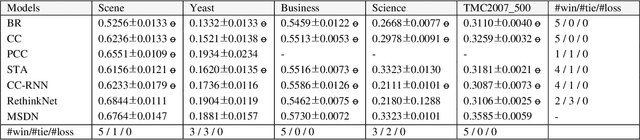

We present Multi-Scale Label Dependence Relation Networks (MSDN), a novel approach to multi-label classification (MLC) that uses 1-dimensional convolution kernels to learn label dependencies at multiple scales. Modern multi-label classifiers have adopted recurrent neural networks (RNNs) as a memory structure to capture and exploit label dependency relations. RNN-based MLC models, however, tend to introduce a very large number of parameters, which may cause underfitting or overfitting. The proposed method uses a 1-dimensional convolutional neural network (1D-CNN) to serve the same purpose more efficiently. By training a model with multiple kernel sizes, the method learns the dependency relations among labels at multiple scales while using drastically fewer parameters. On public benchmark datasets, we demonstrate that our model achieves higher accuracy with far fewer model parameters than RNN-based MLC models.
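The idea of applying several 1-dimensional kernel sizes along the label axis can be illustrated with a minimal NumPy sketch. This is not the authors' MSDN architecture: the function names (`conv1d_same`, `multi_scale_label_features`), the kernel sizes (3, 5, 7), the single-channel setup, and the random stand-in label scores are all assumptions made for illustration only.

```python
import numpy as np


def conv1d_same(x, kernel, bias=0.0):
    """Cross-correlate a 1-D signal with a kernel, zero-padded to keep its length."""
    k = len(kernel)
    pad_left = (k - 1) // 2
    pad_right = k - 1 - pad_left
    padded = np.pad(x, (pad_left, pad_right))
    # One row per output position, each row holding the k inputs under the kernel.
    windows = np.lib.stride_tricks.sliding_window_view(padded, k)
    return windows @ kernel + bias


def multi_scale_label_features(label_scores, kernels):
    """Return one feature map per kernel: shape (num_kernels, num_labels)."""
    return np.stack([conv1d_same(label_scores, w) for w in kernels])


rng = np.random.default_rng(0)
num_labels = 10
# Hypothetical per-label scores, standing in for the output of some base classifier.
label_scores = rng.normal(size=num_labels)

# Assumed kernel sizes: each one mixes a differently sized neighborhood of labels.
kernel_sizes = (3, 5, 7)
kernels = [rng.normal(size=k) for k in kernel_sizes]

features = multi_scale_label_features(label_scores, kernels)

# Each single-channel kernel has k weights plus one bias, regardless of num_labels.
conv_params = sum(k + 1 for k in kernel_sizes)
print(features.shape, conv_params)
```

In this sketch the number of convolution parameters depends only on the kernel sizes, not on the number of labels, which is one way to see why a convolutional label-relation module can stay small. A trained model would learn the kernel weights rather than sample them at random.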
