Multi-Person Pose Estimation with Enhanced Feature Aggregation and Selection

Paper and Code

Mar 20, 2020

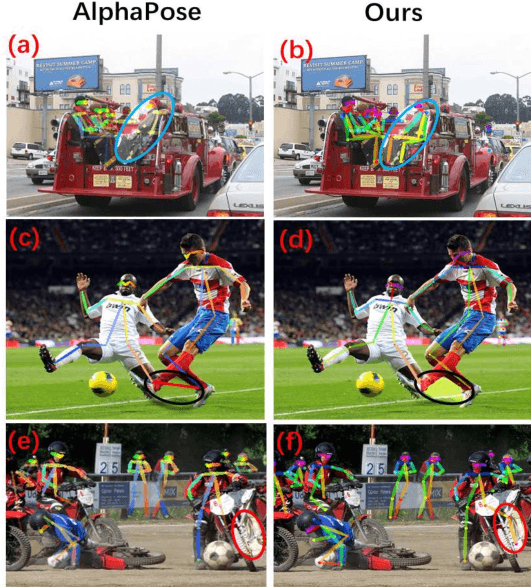

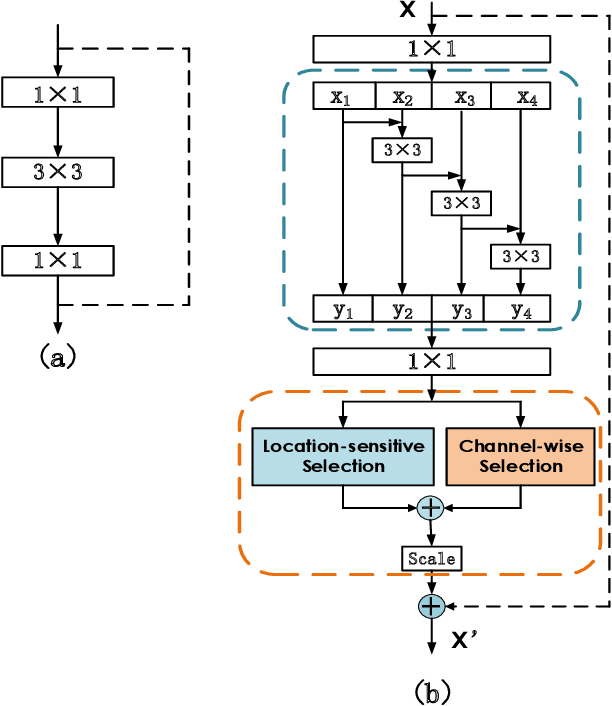

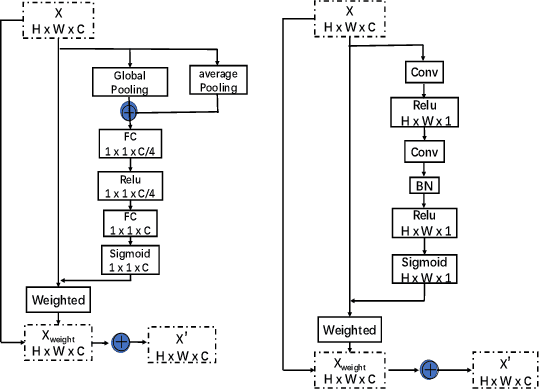

We propose a novel Enhanced Feature Aggregation and Selection network (EFASNet) for multi-person 2D human pose estimation. Due to enhanced feature representation, our method can well handle crowded, cluttered and occluded scenes. More specifically, a Feature Aggregation and Selection Module (FASM), which constructs hierarchical multi-scale feature aggregation and makes the aggregated features discriminative, is proposed to get more accurate fine-grained representation, leading to more precise joint locations. Then, we perform a simple Feature Fusion (FF) strategy which effectively fuses high-resolution spatial features and low-resolution semantic features to obtain more reliable context information for well-estimated joints. Finally, we build a Dense Upsampling Convolution (DUC) module to generate more precise prediction, which can recover missing joint details that are usually unavailable in common upsampling process. As a result, the predicted keypoint heatmaps are more accurate. Comprehensive experiments demonstrate that the proposed approach outperforms the state-of-the-art methods and achieves the superior performance over three benchmark datasets: the recent big dataset CrowdPose, the COCO keypoint detection dataset and the MPII Human Pose dataset. Our code will be released upon acceptance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge