Modified Double DQN: addressing stability

Paper and Code

Aug 09, 2021

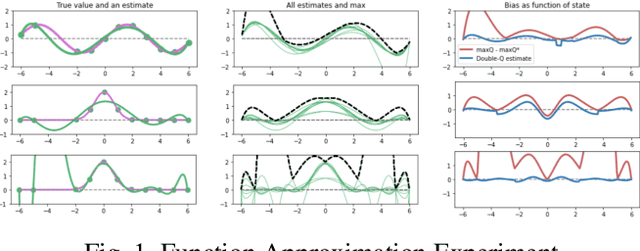

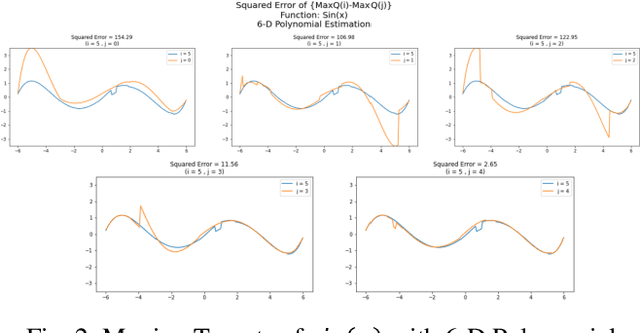

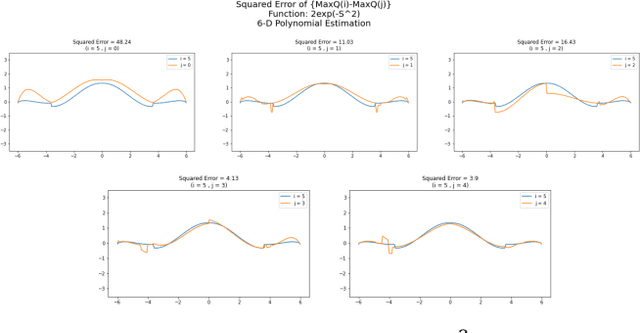

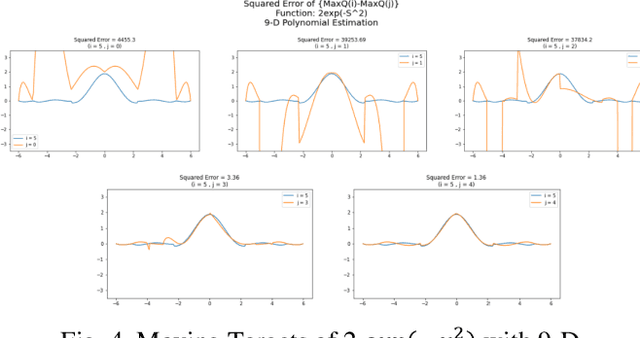

Inspired by double q learning algorithm, the double DQN algorithm was originally proposed in order to address the overestimation issue in the original DQN algorithm. The double DQN has successfully shown both theoretically and empirically the importance of decoupling in terms of action evaluation and selection in computation of targets values; although, all the benefits were acquired with only a simple adaption to DQN algorithm, minimal possible change as it was mentioned by the authors. Nevertheless, there seems a roll-back in the proposed algorithm of Double-DQN since the parameters of policy network are emerged again in the target value function which were initially withdrawn by DQN with the hope of tackling the serious issue of moving targets and the instability caused by it (i.e., by moving targets) in the process of learning. Therefore, in this paper three modifications to the Double-DQN algorithm are proposed with the hope of maintaining the performance in the terms of both stability and overestimation. These modifications are focused on the logic of decoupling the best action selection and evaluation in the target value function and the logic of tackling the moving targets issue. Each of these modifications have their own pros and cons compared to the others. The mentioned pros and cons mainly refer to the execution time required for the corresponding algorithm and the stability provided by the corresponding algorithm. Also, in terms of overestimation, none of the modifications seem to underperform compared to the original Double-DQN if not outperform it. With the intention of evaluating the efficacy of the proposed modifications, multiple empirical experiments along with theoretical experiments were conducted. The results obtained are represented and discussed in this article.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge