Mix and Mask Actor-Critic Methods


Jun 24, 2021

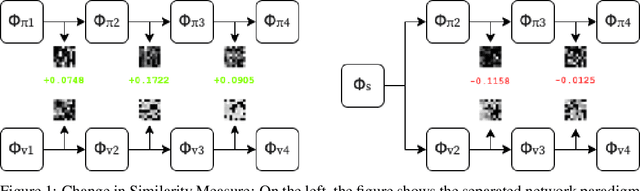

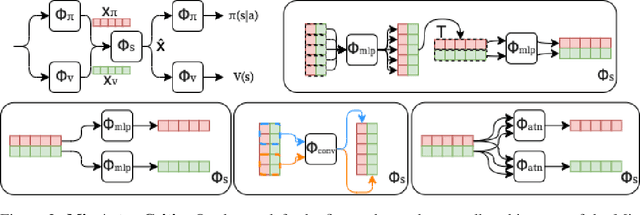

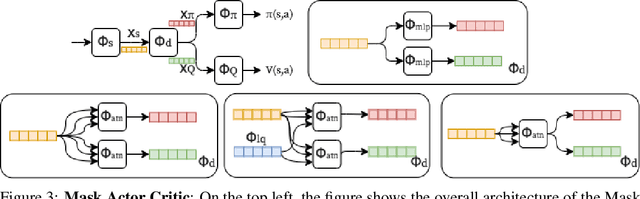

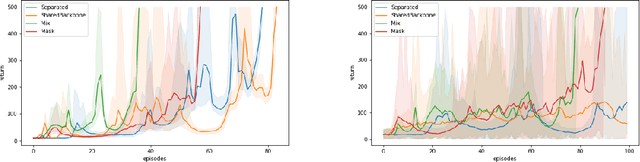

Shared feature spaces for actor-critic methods aim to capture generalized latent representations for use by both the policy and the value function, in the hope of more stable and sample-efficient optimization. However, such a paradigm presents a number of challenges in practice, as the parameters generating a shared representation must learn from two distinct objectives, resulting in competing updates and learning perturbations. In this paper, we present a novel feature-sharing framework that addresses these difficulties by introducing the mix and mask mechanisms and the distributional scalarization technique. These mechanisms behave dynamically to couple and decouple connected latent features variably between the policy and value function, while distributional scalarization standardizes the two objectives from a probabilistic standpoint. Our experimental results demonstrate significant performance improvements over alternative methods that use separate networks or networks with a shared backbone.
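To make the "competing updates" problem concrete, the following is a minimal sketch of how a policy loss and a value loss can pull shared parameters in opposing directions. It is not the paper's mix and mask mechanism or its distributional scalarization. The tiny linear model, the function names (`shared_gradients`, `cosine`), and all numeric values are assumptions chosen purely for illustration.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(M, v):
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

def outer(u, v):
    return [[u_i * v_j for v_j in v] for u_i in u]

def shared_gradients(W, U, c, x, action, advantage, ret):
    """Gradients of both losses w.r.t. the shared weights W (hypothetical toy model).

    Shared features: h = W x
    Policy head:     pi = softmax(U h), loss = -advantage * log pi[action]
    Value head:      v = c . h,         loss = 0.5 * (v - ret)^2
    """
    h = matvec(W, x)
    pi = softmax(matvec(U, h))
    v = sum(c_j * h_j for c_j, h_j in zip(c, h))
    n = len(h)
    # d(log pi[a]) / dh_j = U[a][j] - sum_k pi[k] * U[k][j]
    expected_u = [sum(pi[k] * U[k][j] for k in range(len(U))) for j in range(n)]
    dpolicy_dh = [-advantage * (U[action][j] - expected_u[j]) for j in range(n)]
    dvalue_dh = [(v - ret) * c_j for c_j in c]
    # Since h = W x, the chain rule gives dL/dW = outer(dL/dh, x).
    return outer(dpolicy_dh, x), outer(dvalue_dh, x)

def cosine(A, B):
    a = [e for row in A for e in row]
    b = [e for row in B for e in row]
    dot = sum(p * q for p, q in zip(a, b))
    norm_a = math.sqrt(sum(p * p for p in a))
    norm_b = math.sqrt(sum(q * q for q in b))
    return dot / (norm_a * norm_b)

# Illustrative values only: identity backbone, two actions, two shared features.
W = [[1.0, 0.0], [0.0, 1.0]]
U = [[1.0, 0.0], [0.0, 1.0]]
c = [1.0, 0.0]
x = [1.0, 0.5]

grad_policy, grad_value = shared_gradients(
    W, U, c, x, action=0, advantage=1.0, ret=0.0
)
similarity = cosine(grad_policy, grad_value)
print(f"cosine similarity between policy and value gradients: {similarity:.4f}")
```

In this toy setting the cosine similarity comes out negative: the policy loss wants to raise the first shared feature (to make action 0 more likely), while the value loss wants to lower it (the predicted value overshoots the return). A single gradient step on the summed loss therefore partially cancels both updates, which is the kind of interference a feature-sharing framework has to manage.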
