Meta-TTT: A Meta-learning Minimax Framework For Test-Time Training

Paper and Code

Oct 02, 2024

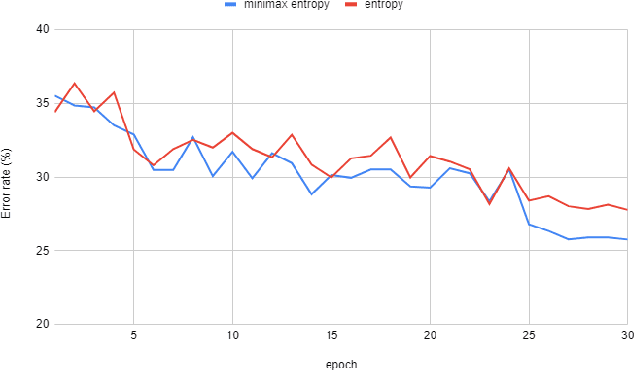

Test-time domain adaptation is a challenging task that aims to adapt a pre-trained model to limited, unlabeled target data during inference. Current methods that rely on self-supervision and entropy minimization underperform when the self-supervised learning (SSL) task does not align well with the primary objective. Additionally, minimizing entropy can lead to suboptimal solutions when there is limited diversity within minibatches. This paper introduces a meta-learning minimax framework for test-time training on batch normalization (BN) layers, ensuring that the SSL task aligns with the primary task while addressing minibatch overfitting. We adopt a mixed-BN approach that interpolates current test batch statistics with the statistics from source domains and propose a stochastic domain synthesizing method to improve model generalization and robustness to domain shifts. Extensive experiments demonstrate that our method surpasses state-of-the-art techniques across various domain adaptation and generalization benchmarks, significantly enhancing the pre-trained model's robustness on unseen domains.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge