Meta-Cal: Well-controlled Post-hoc Calibration by Ranking

May 10, 2021

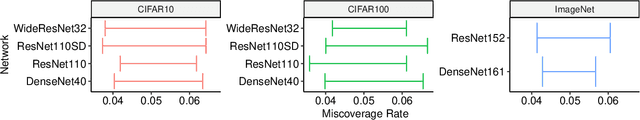

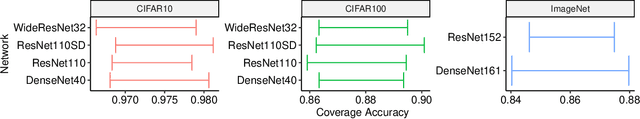

In many applications, it is desirable for a classifier not only to make accurate predictions but also to output calibrated probabilities. However, many existing classifiers, especially deep neural network classifiers, tend not to be calibrated. Post-hoc calibration is a technique for recalibrating a trained model; its goal is to learn a calibration map. Existing approaches mostly focus on constructing calibration maps with low calibration error. In contrast, we study post-hoc calibration for multi-class classification under constraints, since a calibrator with low calibration error is not necessarily useful in practice. In this paper, we introduce two practical constraints to be taken into consideration. We then present Meta-Cal, which is built from a base calibrator and a ranking model. Under some mild assumptions, two high-probability bounds are given with respect to these constraints. Empirical results on CIFAR-10, CIFAR-100, and ImageNet across a range of popular network architectures show that our proposed method significantly outperforms the current state of the art for post-hoc multi-class classification calibration.
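To make the notion of a calibration map concrete, the following is a minimal sketch of one widely used post-hoc calibrator, temperature scaling, together with a binned expected calibration error (ECE) estimate. It is not Meta-Cal itself, and the abstract does not say which base calibrator Meta-Cal uses. The function names, the grid search, and the toy logits are all assumptions made for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax of logits / temperature, computed in a numerically stable way."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def nll(logit_rows, labels, temperature):
    """Mean negative log-likelihood of the true labels on a held-out set."""
    return -sum(
        math.log(softmax(row, temperature)[y])
        for row, y in zip(logit_rows, labels)
    ) / len(labels)

def fit_temperature(logit_rows, labels, grid=None):
    """Pick the temperature that minimizes held-out NLL (simple grid search)."""
    grid = grid or [0.25 * k for k in range(1, 41)]  # 0.25, 0.5, ..., 10.0
    return min(grid, key=lambda t: nll(logit_rows, labels, t))

def expected_calibration_error(probs, labels, n_bins=10):
    """Bin-size-weighted average of |accuracy - confidence| over confidence bins."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        conf = max(p)
        pred = p.index(conf)
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, 1.0 if pred == y else 0.0))
    n = len(labels)
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(c for c, _ in b) / len(b)
            acc = sum(a for _, a in b) / len(b)
            ece += len(b) / n * abs(acc - avg_conf)
    return ece

# Hypothetical toy data: an overconfident 3-class model that is right 3 times out of 4.
logit_rows = [[4.0, 0.0, 0.0]] * 4
labels = [0, 0, 0, 1]

t = fit_temperature(logit_rows, labels)
before = expected_calibration_error([softmax(r) for r in logit_rows], labels)
after = expected_calibration_error([softmax(r, t) for r in logit_rows], labels)
print(f"temperature={t}, ECE before={before:.3f}, ECE after={after:.3f}")
```

Here the calibration map is the single-parameter function that divides the logits by a fitted temperature before the softmax. Because it leaves the predicted class unchanged, it lowers the calibration error without affecting accuracy. That is the kind of low-error objective the abstract contrasts with calibration under additional practical constraints.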
