MEG: Generating Molecular Counterfactual Explanations for Deep Graph Networks

Paper and Code

Apr 16, 2021

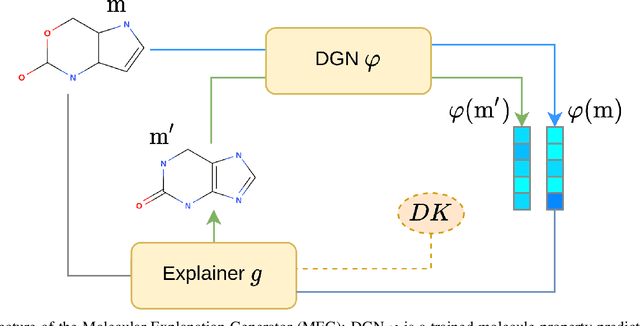

Explainable AI (XAI) is a research area that aims to increase the trustworthiness of opaque machine learning techniques and to shed light on their hidden mechanisms. This becomes increasingly important when such models are applied to the chemistry domain, because of their potential impact on human health, e.g., toxicity analysis in pharmacology. In this paper, we present a novel approach to the explainability of deep graph networks (DGNs) in the context of molecule property prediction tasks, named MEG (Molecular Explanation Generator). We generate informative counterfactual explanations for a specific prediction in the form of (valid) compounds with high structural similarity and different predicted properties. Given a trained DGN, we train a reinforcement learning (RL) based generator to output counterfactual explanations. At each step, MEG feeds the current candidate counterfactual into the DGN, collects the prediction, and uses it to reward the RL agent and guide the exploration. Furthermore, we restrict the action space of the agent to keep only actions that maintain the molecule in a valid state. We discuss the results, showing how the model can provide non-ML experts with key insights into what the learning model focuses on in the neighbourhood of a molecule.
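The loop described in the abstract (propose a valid edit, query the predictor, reward a changed prediction and high similarity) can be illustrated with a toy sketch. This is not the authors' implementation: molecules are reduced to sets of fragment labels, `toy_predict` is a hypothetical stand-in for the trained DGN, the validity rule and the weight `alpha` are assumptions, and a greedy search replaces the RL agent.

```python
from typing import Callable, FrozenSet, List

Molecule = FrozenSet[str]  # toy stand-in: a molecule as a set of fragment labels

FRAGMENTS = ["C", "N", "O", "Cl", "NO2"]  # hypothetical fragment vocabulary


def tanimoto(a: Molecule, b: Molecule) -> float:
    """Tanimoto (Jaccard) similarity between two fragment sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def is_valid(mol: Molecule) -> bool:
    """Toy validity rule (assumption): the molecule must contain carbon."""
    return "C" in mol


def valid_actions(mol: Molecule) -> List[Molecule]:
    """Single-fragment additions and removals, restricted to valid results."""
    candidates = [mol | {f} for f in FRAGMENTS if f not in mol]
    candidates += [mol - {f} for f in sorted(mol)]
    return [c for c in candidates if is_valid(c)]


def reward(original: Molecule, candidate: Molecule,
           predict: Callable[[Molecule], float], alpha: float = 0.5) -> float:
    """Trade off moving the prediction away from the original class
    against structural similarity to the original molecule."""
    original_is_positive = predict(original) >= 0.5
    p = predict(candidate)
    flip_score = (1.0 - p) if original_is_positive else p
    return alpha * flip_score + (1.0 - alpha) * tanimoto(original, candidate)


def greedy_counterfactual(original: Molecule,
                          predict: Callable[[Molecule], float],
                          steps: int = 3) -> Molecule:
    """Greedy stand-in for the RL agent: take the best-rewarded valid
    action at each step and return the best candidate seen overall."""
    current = original
    best, best_reward = original, reward(original, original, predict)
    for _ in range(steps):
        actions = valid_actions(current)
        if not actions:
            break
        current = max(actions, key=lambda c: reward(original, c, predict))
        r = reward(original, current, predict)
        if r > best_reward:
            best, best_reward = current, r
    return best


def toy_predict(mol: Molecule) -> float:
    """Hypothetical 'toxicity' predictor that keys on the NO2 fragment."""
    return 0.9 if "NO2" in mol else 0.1


if __name__ == "__main__":
    molecule = frozenset({"C", "N", "NO2"})
    counterfactual = greedy_counterfactual(molecule, toy_predict)
    print(sorted(counterfactual), toy_predict(counterfactual))
```

In this toy setting the returned counterfactual differs from the input only in the fragment the predictor relies on, which is the kind of insight a counterfactual explanation is meant to expose. A real pipeline would replace the fragment sets with molecular graphs, the validity rule with chemical valence checks, and the greedy step with a learned policy.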
