m-RevNet: Deep Reversible Neural Networks with Momentum

Paper and Code

Aug 16, 2021

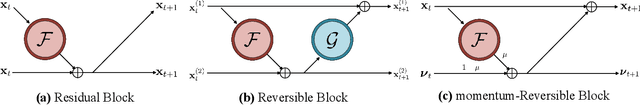

In recent years, the connections between deep residual networks and first-order Ordinary Differential Equations (ODEs) have been disclosed. In this work, we further bridge the deep neural architecture design with the second-order ODEs and propose a novel reversible neural network, termed as m-RevNet, that is characterized by inserting momentum update to residual blocks. The reversible property allows us to perform backward pass without access to activation values of the forward pass, greatly relieving the storage burden during training. Furthermore, the theoretical foundation based on second-order ODEs grants m-RevNet with stronger representational power than vanilla residual networks, which potentially explains its performance gains. For certain learning scenarios, we analytically and empirically reveal that our m-RevNet succeeds while standard ResNet fails. Comprehensive experiments on various image classification and semantic segmentation benchmarks demonstrate the superiority of our m-RevNet over ResNet, concerning both memory efficiency and recognition performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge