LucidDream: Controlled Temporally-Consistent DeepDream on Videos


Nov 27, 2019

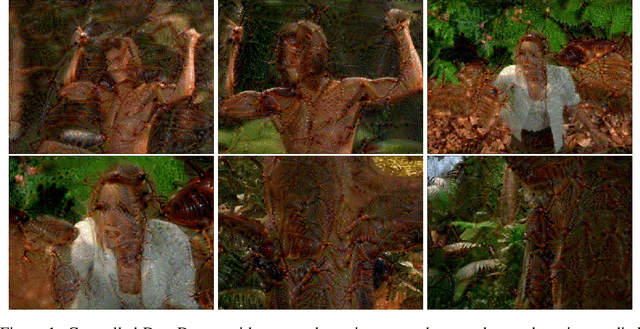

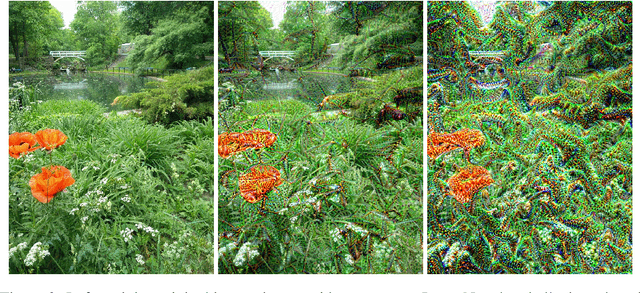

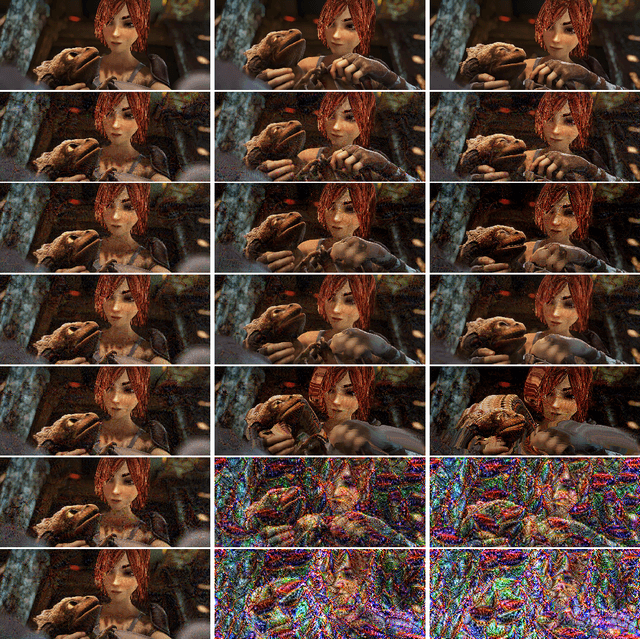

In this work, we propose a set of techniques that improve controllability and aesthetic appeal when DeepDream, which uses a pre-trained neural network to modify images by hallucinating objects into them, is applied to videos. In particular, we demonstrate a simple modification that improves control over the class of object that DeepDream is induced to hallucinate. We also show that the flickering artifacts that frequently appear when DeepDream is applied to videos can be mitigated by adding a temporal consistency loss term.
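To make the idea of a temporal consistency term concrete, here is a minimal NumPy sketch of one common formulation: a masked mean squared difference between the current dreamed frame and the previous dreamed frame warped into the current frame's coordinates. This is an illustration under stated assumptions, not the paper's implementation. The function names, the optional `mask` (for example, an occlusion mask), the `weight` parameter, and the use of a single class score as the dreaming objective are all hypothetical; the abstract does not specify how the loss is defined or how warping is performed.

```python
import numpy as np


def temporal_consistency_loss(frame, warped_prev, mask=None):
    """Masked mean squared difference between two H x W x C frames.

    `warped_prev` is assumed to be the previous dreamed frame already
    warped into the current frame's coordinates (e.g. via optical flow);
    the warping itself is outside the scope of this sketch.
    """
    frame = np.asarray(frame, dtype=np.float64)
    warped_prev = np.asarray(warped_prev, dtype=np.float64)
    if mask is None:
        # Assumption: with no mask, every pixel counts equally.
        mask = np.ones(frame.shape[:2], dtype=np.float64)
    mask = np.asarray(mask, dtype=np.float64)

    # Per-pixel squared error, summed over channels -> shape (H, W).
    sq_err = ((frame - warped_prev) ** 2).sum(axis=-1)
    # Normalize by the number of valid pixel-channel entries.
    denom = max(mask.sum() * frame.shape[-1], 1.0)
    return float((mask * sq_err).sum() / denom)


def dream_objective(class_score, frame, warped_prev, mask=None, weight=1.0):
    """Hypothetical combined objective to minimize.

    Maximizes the score of one chosen class (negated here) while
    penalizing frame-to-frame differences. `weight` trades off the two.
    """
    penalty = temporal_consistency_loss(frame, warped_prev, mask)
    return -class_score + weight * penalty
```

In this sketch, a larger `weight` favors smoother video at the cost of weaker hallucinations, and setting it to zero recovers independent per-frame dreaming, which is the setting in which flickering would be expected.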

* Workshop on Machine Learning for Creativity and Design, NeurIPS 2019
