Low-Rank Factorization for Rank Minimization with Nonconvex Regularizers

Paper and Code

Jun 13, 2020

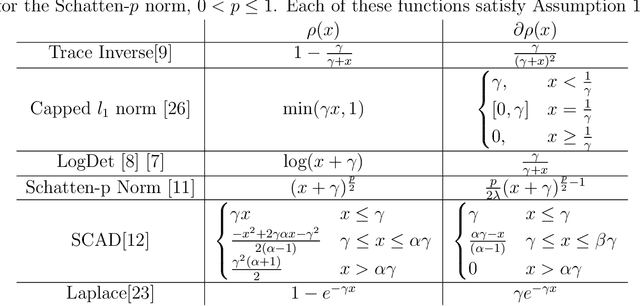

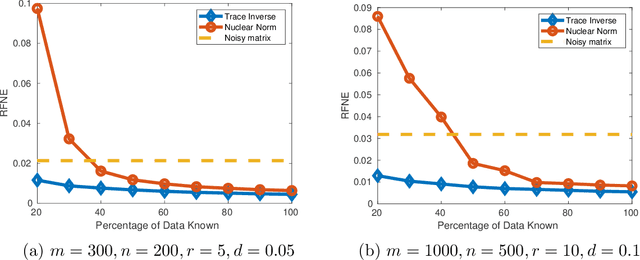

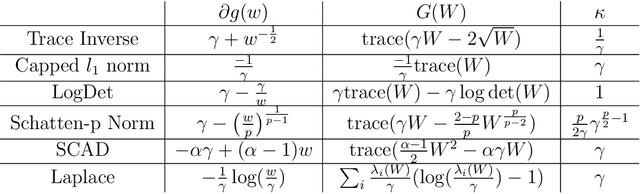

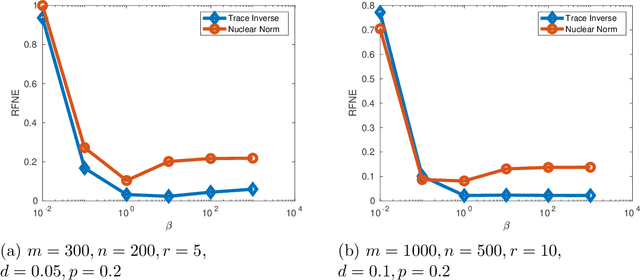

Rank minimization is of interest in machine learning applications such as recommender systems and robust principal component analysis. Minimizing the nuclear norm, the convex relaxation of the rank function, is an effective way to solve the problem and comes with strong performance guarantees. However, nonconvex relaxations have less estimation bias than the nuclear norm and can more accurately reduce the effect of noise on the measurements. We develop efficient algorithms based on iteratively reweighted nuclear norm schemes that also use the low-rank factorization for semidefinite programs put forth by Burer and Monteiro. We prove convergence and show computationally the advantages over convex relaxations and alternating minimization methods. Additionally, the computational complexity of each iteration of our algorithm is on par with other state-of-the-art algorithms, allowing us to quickly find solutions to the rank minimization problem for large matrices.
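As background for the factorization idea mentioned above, the following is a minimal sketch of a standard fact that factored approaches commonly rely on: for a matrix X written as X = U Vᵀ, the nuclear norm of X is the minimum of ½(‖U‖_F² + ‖V‖_F²) over such factorizations, and the minimum is attained by splitting the singular values evenly between the two factors. This is not the paper's algorithm, and the abstract does not state this identity. The function names `balanced_factors` and `factored_nuclear_norm` and the test matrix are assumptions made purely for illustration.

```python
import numpy as np

def balanced_factors(X, rank):
    """Hypothetical helper: factors U, V such that U @ V.T is the best
    rank-`rank` approximation of X, with singular values split evenly."""
    A, s, Bt = np.linalg.svd(X, full_matrices=False)
    root = np.sqrt(s[:rank])
    U = A[:, :rank] * root      # scale each left singular vector by sqrt(sigma_i)
    V = Bt[:rank, :].T * root   # scale each right singular vector by sqrt(sigma_i)
    return U, V

def factored_nuclear_norm(U, V):
    """Factored surrogate 0.5 * (||U||_F^2 + ||V||_F^2)."""
    return 0.5 * (np.linalg.norm(U, "fro") ** 2 + np.linalg.norm(V, "fro") ** 2)

# Illustrative data (an assumption, not from the paper): an exactly rank-4 matrix.
rng = np.random.default_rng(0)
X = rng.standard_normal((30, 4)) @ rng.standard_normal((4, 20))

U, V = balanced_factors(X, rank=4)
nuclear = np.linalg.norm(X, "nuc")       # sum of singular values of X
surrogate = factored_nuclear_norm(U, V)  # matches `nuclear` up to rounding
```

Working with the thin factors U and V rather than the full matrix is what keeps per-iteration cost low for large problems, since a full singular value decomposition of X is no longer needed at every step.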
