Long-Time Convergence and Propagation of Chaos for Nonlinear MCMC


Feb 11, 2022

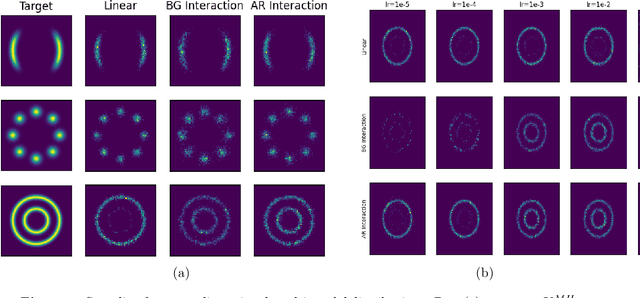

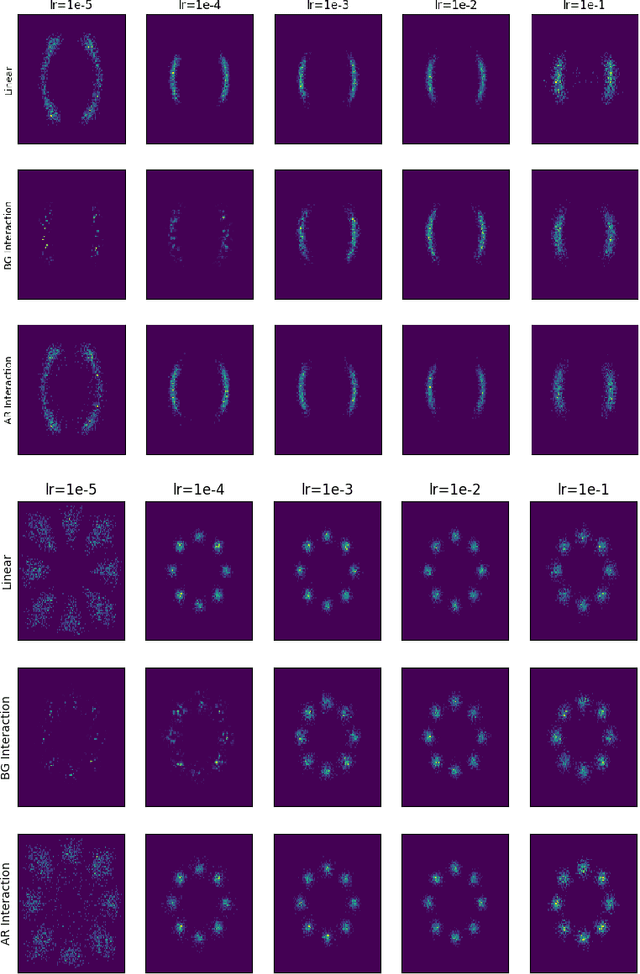

In this paper, we study the long-time convergence and uniform strong propagation of chaos for a class of nonlinear Markov chains for Markov chain Monte Carlo (MCMC). Our technique is quite simple, making use of recent contraction estimates for linear Markov kernels and basic techniques from Markov theory and analysis. Moreover, the same proof strategy applies to both the long-time convergence and propagation of chaos. We also show, via some experiments, that these nonlinear MCMC techniques are viable for use in real-world high-dimensional inference such as Bayesian neural networks.

* 18+12 pages, 2 figures
