Leveraging Untrustworthy Commands for Multi-Robot Coordination in Unpredictable Environments: A Bandit Submodular Maximization Approach


Sep 28, 2023

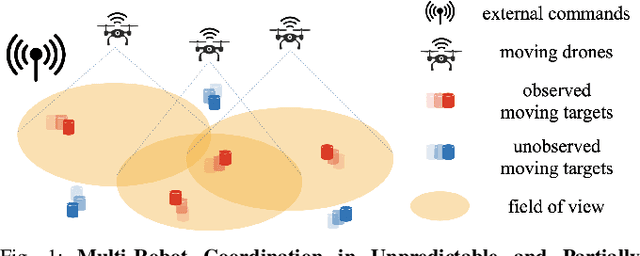

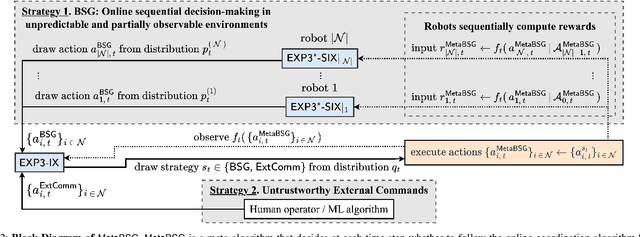

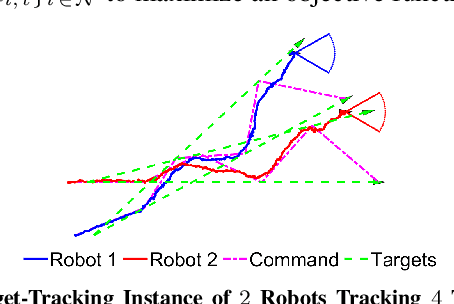

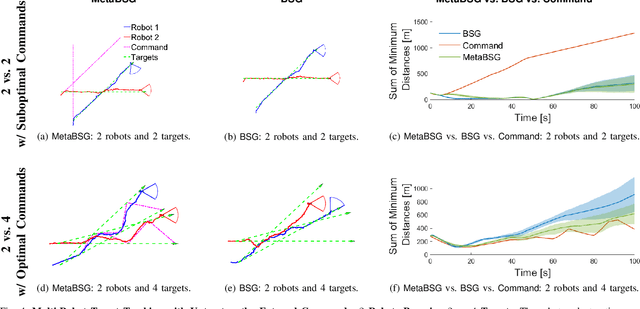

We study multi-agent coordination in unpredictable and partially observable environments with untrustworthy external commands. The commands are actions suggested to the robots, and they are untrustworthy in that their performance guarantees, if any, are unknown. Such commands may be generated by human operators or machine learning algorithms and, although untrustworthy, can often improve the robots' performance in complex multi-robot tasks. We are motivated by tasks such as target tracking, environmental mapping, and area monitoring, which are often modeled as submodular maximization problems because of the information overlap among the robots. We provide an algorithm, Meta Bandit Sequential Greedy (MetaBSG), that enjoys performance guarantees even when the external commands are arbitrarily bad. MetaBSG uses a meta-algorithm to learn whether the robots should follow the commands or a recently developed submodular coordination algorithm, Bandit Sequential Greedy (BSG) [1], which has performance guarantees even in unpredictable and partially observable environments. In particular, MetaBSG can asymptotically achieve the better of the two performances, that of the commands and that of BSG, and we quantify its suboptimality against the optimal time-varying multi-robot actions in hindsight. Thus, MetaBSG can be interpreted as robustifying the untrustworthy commands. We validate our algorithm in simulated multi-target tracking scenarios.
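To make the idea of learning whether to follow the commands or BSG more concrete, the sketch below uses a standard Exp3-style update to choose between two policies when only the chosen policy's reward is observed each round. It is an illustration under stated assumptions, not the authors' MetaBSG: the abstract does not specify the meta-algorithm, and the function name `exp3_meta_selector`, the reward sequences, and the parameter `gamma` are all hypothetical. Rewards are assumed to lie in [0, 1].

```python
import math
import random


def _probabilities(log_w, gamma):
    """Mix the normalized weights with uniform exploration (two arms)."""
    m = max(log_w)  # subtract the max for numerical stability
    w = [math.exp(x - m) for x in log_w]
    s = sum(w)
    return [(1.0 - gamma) * x / s + gamma / 2.0 for x in w]


def exp3_meta_selector(rewards_commands, rewards_bsg, gamma=0.1, seed=0):
    """Exp3-style choice between two policies under bandit feedback.

    Hypothetical inputs: per-round rewards in [0, 1] for following the
    external commands (arm 0) and for following BSG (arm 1). Only the
    reward of the arm chosen in a round is used in that round's update.
    Returns (final selection probabilities, total collected reward).
    """
    rng = random.Random(seed)
    streams = [rewards_commands, rewards_bsg]
    log_w = [0.0, 0.0]  # log-weights, one per policy
    total = 0.0
    for t in range(len(rewards_commands)):
        probs = _probabilities(log_w, gamma)
        arm = 0 if rng.random() < probs[0] else 1
        reward = streams[arm][t]
        total += reward
        # Importance-weighted reward estimate for the chosen arm only.
        log_w[arm] += (gamma / 2.0) * reward / probs[arm]
    return _probabilities(log_w, gamma), total


if __name__ == "__main__":
    # Assumed toy data: the commands are consistently worse than BSG.
    T = 2000
    probs, total = exp3_meta_selector([0.1] * T, [0.9] * T)
    print("P(follow commands), P(follow BSG):", probs)
    print("total reward:", total)
```

In this toy run the selector should shift most of its probability toward whichever policy earns more, which mirrors the abstract's claim at a high level: arbitrarily bad commands cost only the exploration and learning overhead, while good commands can still be exploited.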
