Leveraging Transformers for StarCraft Macromanagement Prediction

Paper and Code

Oct 11, 2021

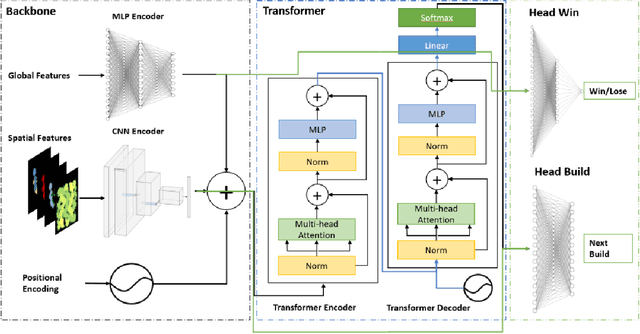

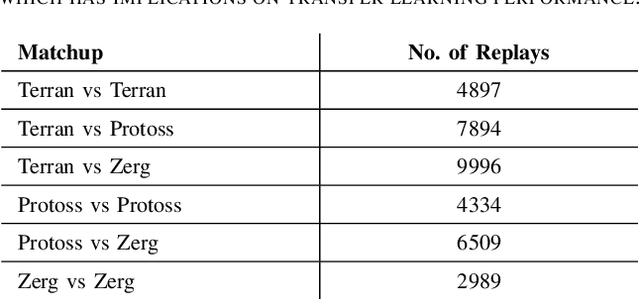

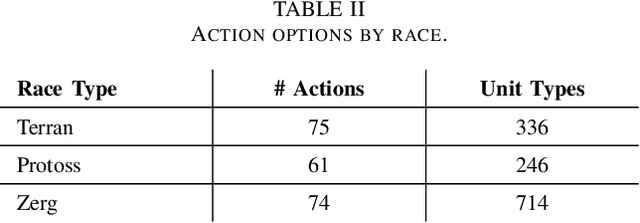

Inspired by the recent success of transformers in natural language processing and computer vision applications, we introduce a transformer-based neural architecture for two key StarCraft II (SC2) macromanagement tasks: global state and build order prediction. Unlike recurrent neural networks which suffer from a recency bias, transformers are able to capture patterns across very long time horizons, making them well suited for full game analysis. Our model utilizes the MSC (Macromanagement in StarCraft II) dataset and improves on the top performing gated recurrent unit (GRU) architecture in predicting global state and build order as measured by mean accuracy over multiple time horizons. We present ablation studies on our proposed architecture that support our design decisions. One key advantage of transformers is their ability to generalize well, and we demonstrate that our model achieves an even better accuracy when used in a transfer learning setting in which models trained on games with one racial matchup (e.g., Terran vs. Protoss) are transferred to a different one. We believe that transformers' ability to model long games, potential for parallelization, and generalization performance make them an excellent choice for StarCraft agents.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge