Learning with Proper Partial Labels

Paper and Code

Dec 23, 2021

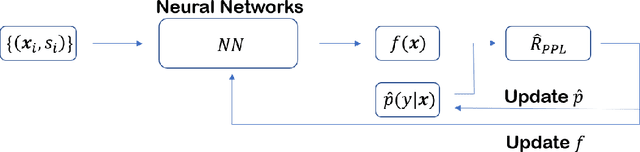

Partial-label learning is a kind of weakly-supervised learning with inexact labels, where for each training example, we are given a set of candidate labels instead of only one true label. Recently, various approaches on partial-label learning have been proposed under different generation models of candidate label sets. However, these methods require relatively strong distributional assumptions on the generation models. When the assumptions do not hold, the performance of the methods is not guaranteed theoretically. In this paper, we propose the notion of properness on partial labels. We show that this proper partial-label learning framework includes many previous partial-label learning settings as special cases. We then derive a unified unbiased estimator of the classification risk. We prove that our estimator is risk-consistent by obtaining its estimation error bound. Finally, we validate the effectiveness of our algorithm through experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge