Learning to Label Affordances from Simulated and Real Data


Sep 26, 2017

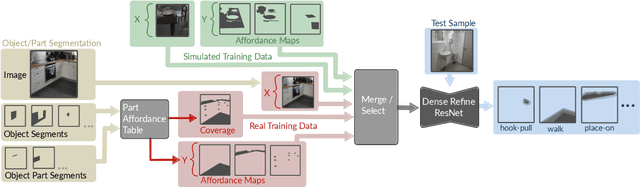

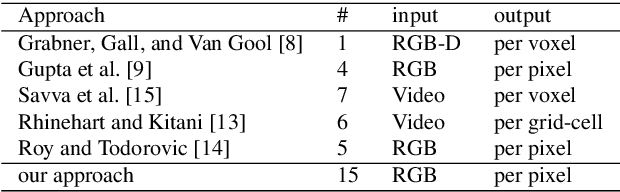

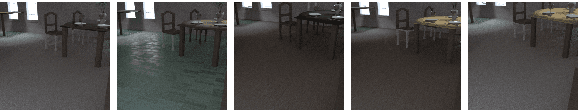

An autonomous robot should be able to evaluate the affordances offered by a given situation. Here we address this problem by designing a system that densely predicts affordances from only a single 2D RGB image. This is achieved with a convolutional neural network (ResNet) combined with refinement modules recently proposed for semantic image segmentation. We define a novel cost function that handles (potentially multiple) affordances of objects and their parts in a pixel-wise manner, even when the data are incomplete. We perform qualitative as well as quantitative evaluations on simulated and real data, assessing 15 different affordances. In general, we find that affordances that are sufficiently well represented in the training data are correctly recognized, with a substantial fraction of pixels assigned correctly. Furthermore, we show that our model outperforms several baselines. Hence, this method can give clear action guidelines for a robot.
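The abstract does not give the form of the cost function. As a rough illustration only, one common way to score several, possibly overlapping affordances per pixel while ignoring missing labels is to apply an independent sigmoid to each affordance channel and average a binary cross-entropy over the labels that are actually known. This sketch is not the paper's loss: the function name `masked_multilabel_bce`, the (H, W, K) array layout, and the `valid` mask marking which labels exist are all assumptions made for illustration.

```python
import numpy as np


def masked_multilabel_bce(logits, targets, valid, eps=1e-7):
    """Hypothetical pixel-wise multi-label loss that skips unlabeled entries.

    logits:  (H, W, K) raw network outputs, one channel per affordance
    targets: (H, W, K) 0/1 labels; a pixel may carry several affordances at once
    valid:   (H, W, K) 1 where the label is known, 0 where it is missing
    """
    # Independent sigmoid per affordance channel (multi-label, not softmax).
    probs = 1.0 / (1.0 + np.exp(-logits))
    # Clip so that log() never sees exactly 0 or 1.
    probs = np.clip(probs, eps, 1.0 - eps)
    bce = -(targets * np.log(probs) + (1.0 - targets) * np.log(1.0 - probs))
    n_valid = valid.sum()
    if n_valid == 0:
        # No known labels in this image: contribute nothing to the loss.
        return 0.0
    # Average only over entries whose label is known; missing ones are masked out.
    return float((bce * valid).sum() / n_valid)


# Example with K = 15 affordance channels on a tiny 4x5 image.
H, W, K = 4, 5, 15
rng = np.random.default_rng(0)
targets = rng.integers(0, 2, size=(H, W, K)).astype(float)
valid = np.ones((H, W, K))
valid[0] = 0  # pretend the first image row has no annotations
loss = masked_multilabel_bce(rng.normal(size=(H, W, K)), targets, valid)
```

Using a per-channel sigmoid rather than a softmax lets one pixel be labeled with more than one affordance, and the mask keeps unannotated pixels or channels from being treated as negatives.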
