Learning Sums of Independent Random Variables with Sparse Collective Support

Paper and Code

Jul 18, 2018

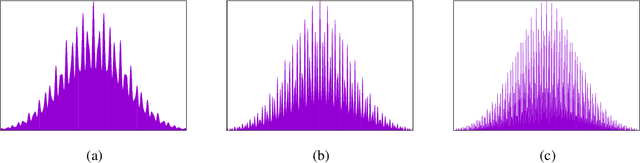

We study the learnability of sums of independent integer random variables given a bound on the size of the union of their supports. For $\mathcal{A} \subset \mathbf{Z}_{+}$, a sum of independent random variables with collective support $\mathcal{A}$} (called an $\mathcal{A}$-sum in this paper) is a distribution $\mathbf{S} = \mathbf{X}_1 + \cdots + \mathbf{X}_N$ where the $\mathbf{X}_i$'s are mutually independent (but not necessarily identically distributed) integer random variables with $\cup_i \mathsf{supp}(\mathbf{X}_i) \subseteq \mathcal{A}.$ We give two main algorithmic results for learning such distributions: 1. For the case $| \mathcal{A} | = 3$, we give an algorithm for learning $\mathcal{A}$-sums to accuracy $\epsilon$ that uses $\mathsf{poly}(1/\epsilon)$ samples and runs in time $\mathsf{poly}(1/\epsilon)$, independent of $N$ and of the elements of $\mathcal{A}$. 2. For an arbitrary constant $k \geq 4$, if $\mathcal{A} = \{ a_1,...,a_k\}$ with $0 \leq a_1 < ... < a_k$, we give an algorithm that uses $\mathsf{poly}(1/\epsilon) \cdot \log \log a_k$ samples (independent of $N$) and runs in time $\mathsf{poly}(1/\epsilon, \log a_k).$ We prove an essentially matching lower bound: if $|\mathcal{A}| = 4$, then any algorithm must use $\Omega(\log \log a_4) $ samples even for learning to constant accuracy. We also give similar-in-spirit (but quantitatively very different) algorithmic results, and essentially matching lower bounds, for the case in which $\mathcal{A}$ is not known to the learner.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge