Learning Language Structures through Grounding

Paper and Code

Jun 14, 2024

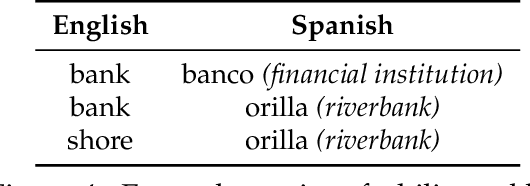

Language is highly structured, with syntactic and semantic structures, to some extent, agreed upon by speakers of the same language. With implicit or explicit awareness of such structures, humans can learn and use language efficiently and generalize to sentences that contain unseen words. Motivated by human language learning, in this dissertation, we consider a family of machine learning tasks that aim to learn language structures through grounding. We seek distant supervision from other data sources (i.e., grounds), including but not limited to other modalities (e.g., vision), execution results of programs, and other languages. We demonstrate the potential of this task formulation and advocate for its adoption through three schemes. In Part I, we consider learning syntactic parses through visual grounding. We propose the task of visually grounded grammar induction, present the first models to induce syntactic structures from visually grounded text and speech, and find that the visual grounding signals can help improve the parsing quality over language-only models. As a side contribution, we propose a novel evaluation metric that enables the evaluation of speech parsing without text or automatic speech recognition systems involved. In Part II, we propose two execution-aware methods to map sentences into corresponding semantic structures (i.e., programs), significantly improving compositional generalization and few-shot program synthesis. In Part III, we propose methods that learn language structures from annotations in other languages. Specifically, we propose a method that sets a new state of the art on cross-lingual word alignment. We then leverage the learned word alignments to improve the performance of zero-shot cross-lingual dependency parsing, by proposing a novel substructure-based projection method that preserves structural knowledge learned from the source language.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge