Learning Blended, Precise Semantic Program Embeddings

Jul 11, 2019

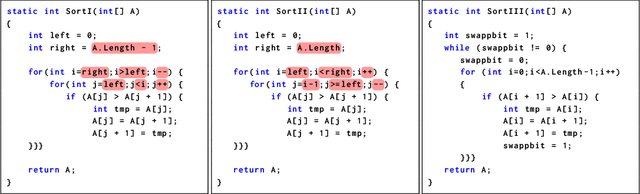

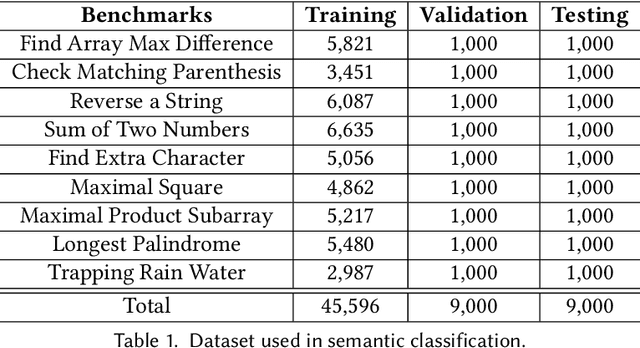

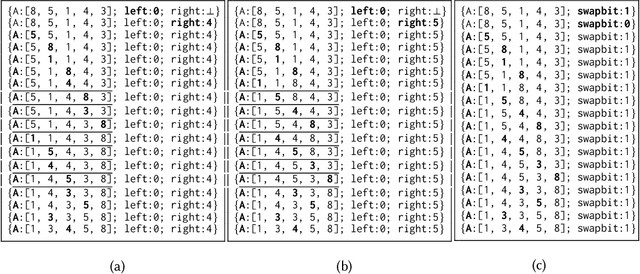

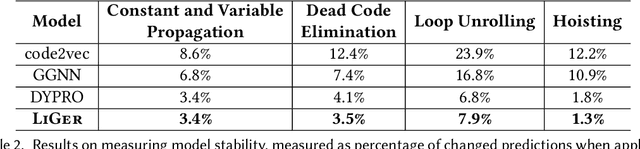

Learning neural program embeddings is key to utilizing deep neural networks in programming languages research: precise and efficient program representations enable the application of deep models to a wide range of program analysis tasks. Existing approaches predominantly learn to embed programs from their source code and, as a result, do not capture deep, precise program semantics. On the other hand, models learned from runtime information depend critically on the quality of program executions, which leads to trained models of highly variable quality. This paper tackles these inherent weaknesses of prior approaches by introducing a new deep neural network, LiGer, which learns program representations from a mixture of symbolic and concrete execution traces. We have evaluated LiGer on COSET, a recently proposed benchmark suite for evaluating neural program embeddings. Results show that LiGer (1) is significantly more accurate than the state-of-the-art syntax-based models Gated Graph Neural Network and code2vec in classifying program semantics, and (2) requires on average 10x fewer executions covering 74% fewer paths than the state-of-the-art dynamic model DyPro. Furthermore, we extend LiGer to predict the name of a method from the vector representation of its body. Learning on the same set of functions (more than 170K in total), LiGer significantly outperforms code2seq, the previous state of the art for method name prediction.
