Leaky ReLUs That Differ in Forward and Backward Pass Facilitate Activation Maximization in Deep Neural Networks

Paper and Code

Oct 22, 2024

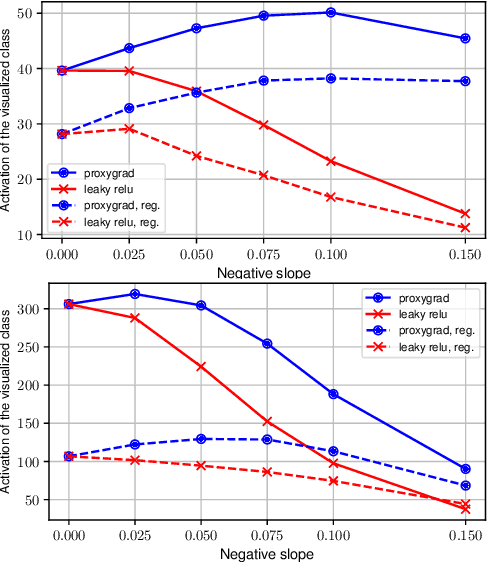

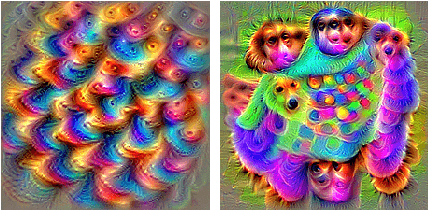

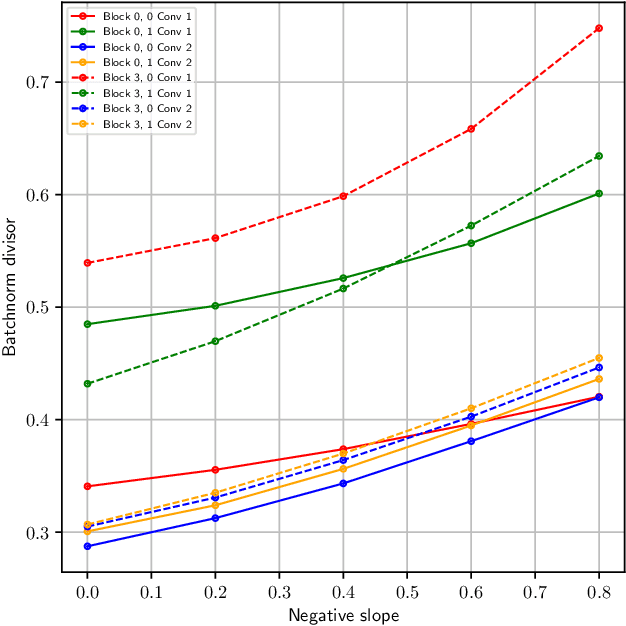

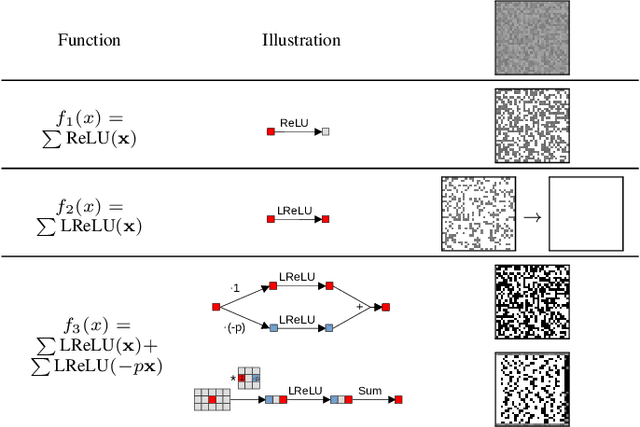

Activation maximization (AM) strives to generate optimal input stimuli, revealing features that trigger high responses in trained deep neural networks. AM is an important method of explainable AI. We demonstrate that AM fails to produce optimal input stimuli for simple functions containing ReLUs or Leaky ReLUs, casting doubt on the practical usefulness of AM and the visual interpretation of the generated images. This paper proposes a solution based on using Leaky ReLUs with a high negative slope in the backward pass while keeping the original, usually zero, slope in the forward pass. The approach significantly increases the maxima found by AM. The resulting ProxyGrad algorithm implements a novel optimization technique for neural networks that employs a secondary network as a proxy for gradient computation. This proxy network is designed to have a simpler loss landscape with fewer local maxima than the original network. Our chosen proxy network is an identical copy of the original network, including its weights, with distinct negative slopes in the Leaky ReLUs. Moreover, we show that ProxyGrad can be used to train the weights of Convolutional Neural Networks for classification such that, on some of the tested benchmarks, they outperform traditional networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge