Latent-Insensitive autoencoders for Anomaly Detection

Paper and Code

Nov 14, 2021

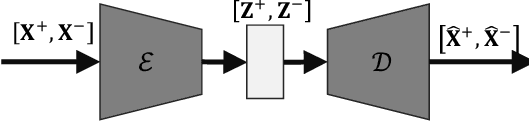

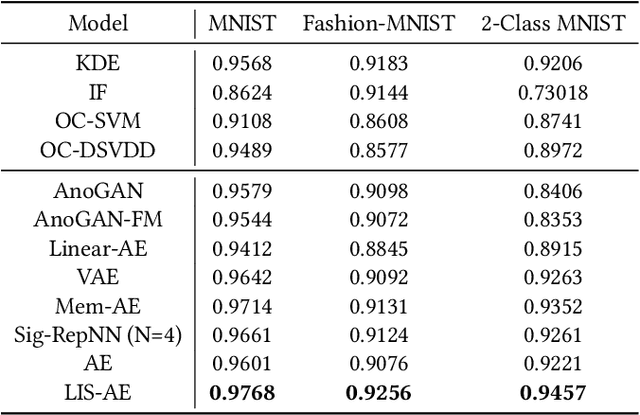

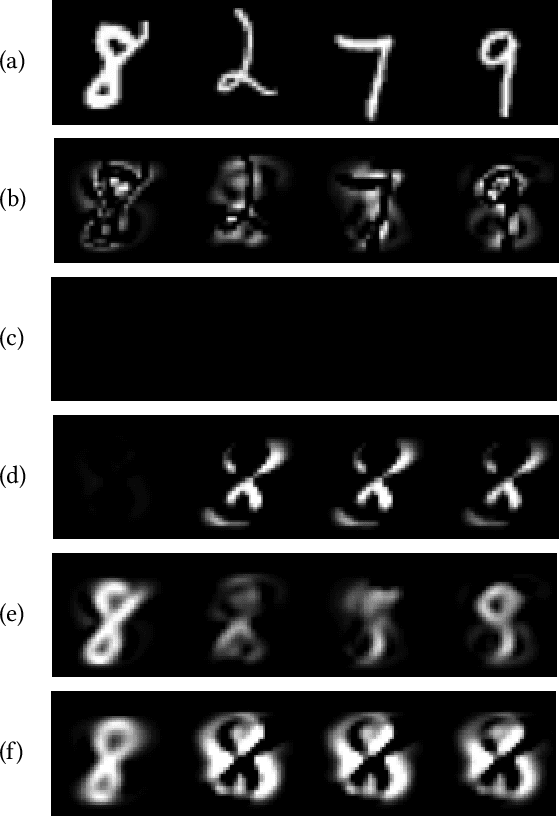

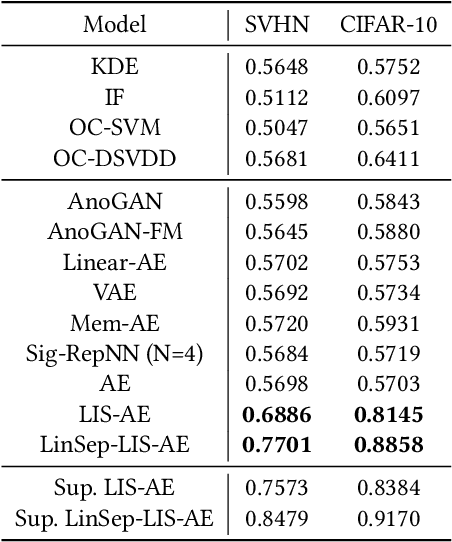

Reconstruction-based approaches to anomaly detection tend to fall short when applied to complex datasets with target classes that possess high inter-class variance. Similar to the idea of self-taught learning used in transfer learning, many domains are rich with similar unlabelled datasets that could be leveraged as a proxy for out-of-distribution samples. In this paper we introduce Latent-Insensitive autoencoder (LIS-AE) where unlabeled data from a similar domain is utilized as negative examples to shape the latent layer (bottleneck) of a regular autoencoder such that it is only capable of reconstructing one task. We provide theoretical justification for the proposed training process and loss functions along with an extensive ablation study highlighting important aspects of our model. We test our model in multiple anomaly detection settings presenting quantitative and qualitative analysis showcasing the significant performance improvement of our model for anomaly detection tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge