Knowledge Fusion Transformers for Video Action Recognition

Paper and Code

Sep 30, 2020

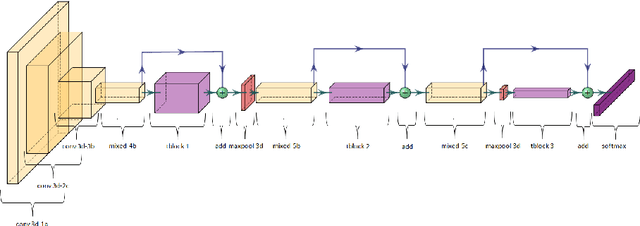

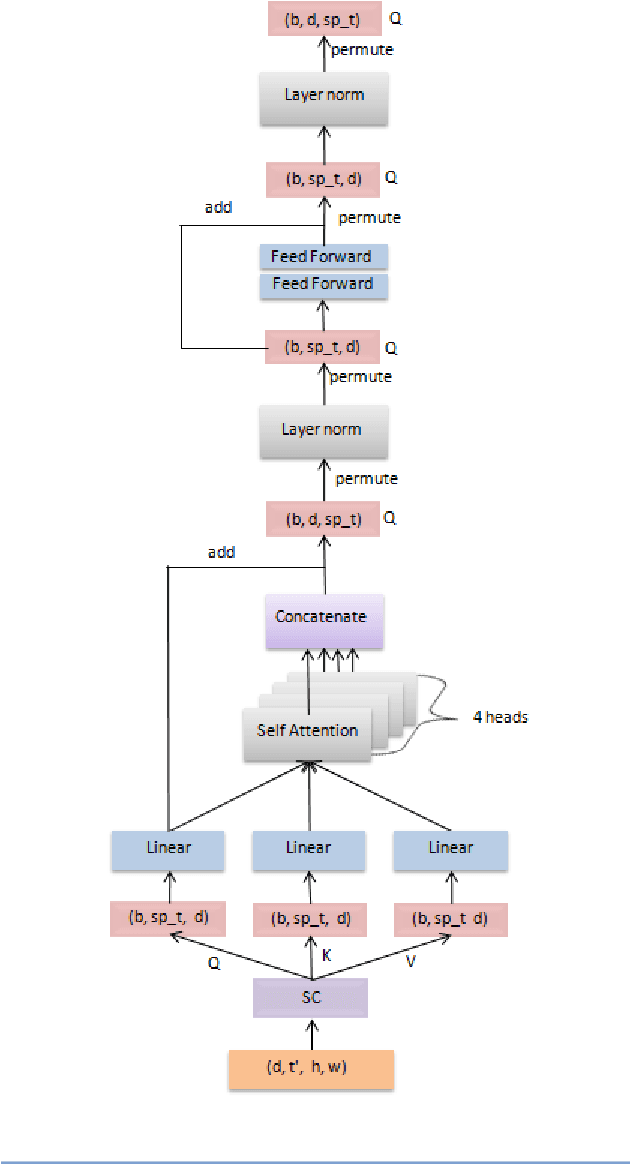

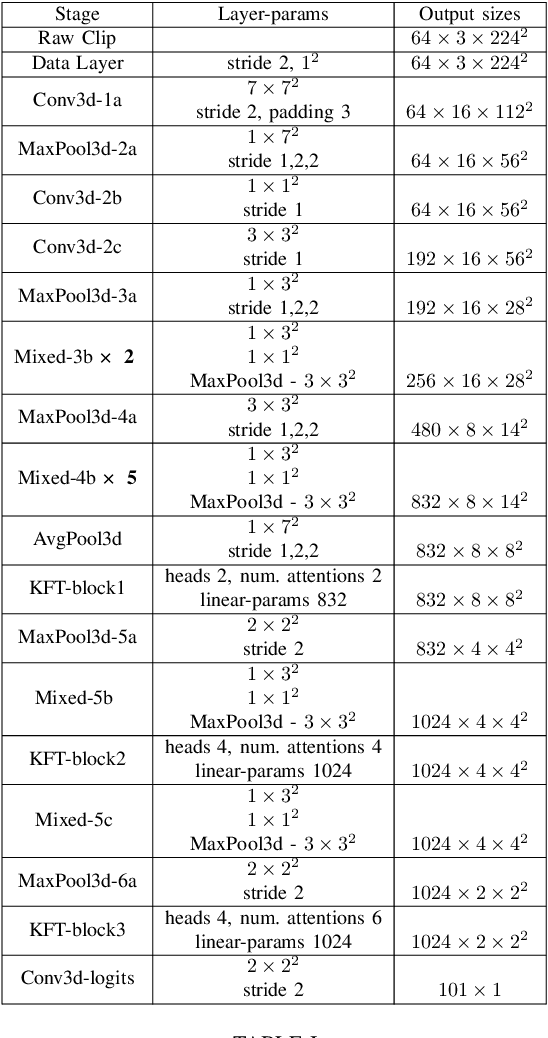

We introduce Knowledge Fusion Transformers for video action classification. We present a self-attention-based feature enhancer that fuses action knowledge into the 3D Inception-based spatio-temporal context of the video clip to be classified. We show how a single-stream network with little or no pretraining can achieve performance close to the current state of the art. Additionally, we show how different self-attention architectures, applied at different levels of the network, can be blended in to enhance the feature representation. Our architecture is trained and evaluated on the UCF-101 and Charades datasets, where it is competitive with the state of the art. It also outperforms, by a large margin, single-stream networks with little or no pretraining.
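The abstract does not spell out the enhancer's architecture, so the following is only a generic illustration of the idea of self-attention over spatio-temporal features, not the authors' implementation. It applies standard scaled dot-product self-attention with a residual connection to a feature map such as a 3D convolutional backbone might produce. The function name `self_attention_enhance`, the projection matrices, and all tensor sizes are assumptions chosen for the example.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention_enhance(features, w_q, w_k, w_v):
    """Hypothetical feature enhancer (illustrative, not the paper's design).

    features: array of shape (T, H, W, C), a spatio-temporal feature map.
    w_q, w_k: projection matrices of shape (C, D).
    w_v:      projection matrix of shape (C, C), so the residual sum is valid.
    Returns the enhanced features (T, H, W, C) and the attention weights (N, N),
    where N = T * H * W.
    """
    t, h, w, c = features.shape
    tokens = features.reshape(t * h * w, c)  # one token per space-time location

    q = tokens @ w_q
    k = tokens @ w_k
    v = tokens @ w_v

    # Scaled dot-product attention: every location attends to every other one.
    scores = (q @ k.T) / np.sqrt(q.shape[-1])
    weights = softmax(scores, axis=-1)
    attended = weights @ v

    # Residual connection keeps the original backbone features in the output.
    enhanced = tokens + attended
    return enhanced.reshape(t, h, w, c), weights


# Assumed sizes: 4 time steps, a 7x7 spatial grid, 32 channels, 16-dim queries/keys.
rng = np.random.default_rng(0)
features = rng.standard_normal((4, 7, 7, 32))
w_q = rng.standard_normal((32, 16)) * 0.1
w_k = rng.standard_normal((32, 16)) * 0.1
w_v = rng.standard_normal((32, 32)) * 0.1

enhanced, weights = self_attention_enhance(features, w_q, w_k, w_v)
print(enhanced.shape, weights.shape)
```

In a trained network the projection matrices would be learned, and a block like this could in principle be placed at more than one depth of the backbone, which is one way to read the abstract's remark about using self-attention at different levels.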
