Is it morally acceptable for a system to lie to persuade me?

Paper and Code

Apr 15, 2014

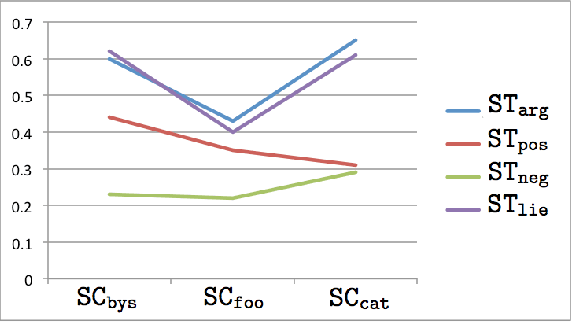

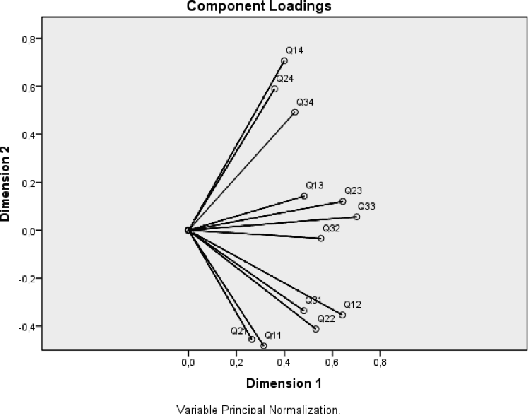

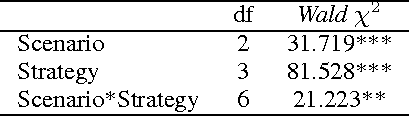

Given the fast rise of increasingly autonomous artificial agents and robots, a key acceptability criterion will be the possible moral implications of their actions. In particular, intelligent persuasive systems (systems designed to influence humans via communication) constitute a highly sensitive topic because of their intrinsically social nature. Still, ethical studies in this area are rare and tend to focus on the output of the required action. Instead, this work focuses on the persuasive acts themselves (e.g. "is it morally acceptable that a machine lies or appeals to the emotions of a person to persuade her, even if for a good end?"). Exploiting a behavioral approach, based on human assessment of moral dilemmas -- i.e. without any prior assumption of underlying ethical theories -- this paper reports on a set of experiments. These experiments address the type of persuader (human or machine), the strategies adopted (purely argumentative, appeal to positive emotions, appeal to negative emotions, lie) and the circumstances. Findings display no differences due to the agent, mild acceptability for persuasion and reveal that truth-conditional reasoning (i.e. argument validity) is a significant dimension affecting subjects' judgment. Some implications for the design of intelligent persuasive systems are discussed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge