Investigation and Analysis of Hyper and Hypo neuron pruning to selectively update neurons during Unsupervised Adaptation


Jan 06, 2020

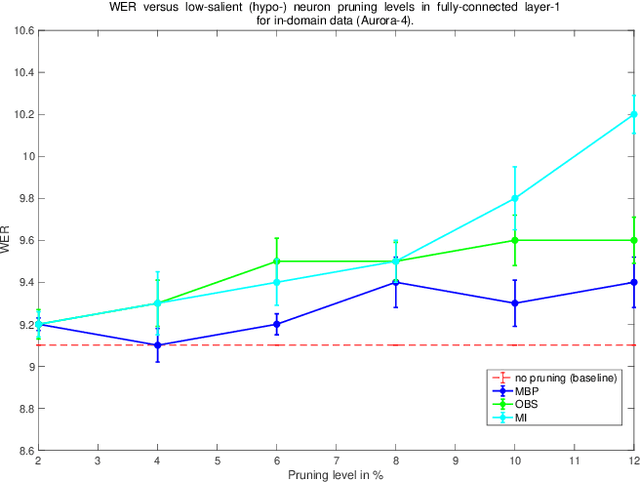

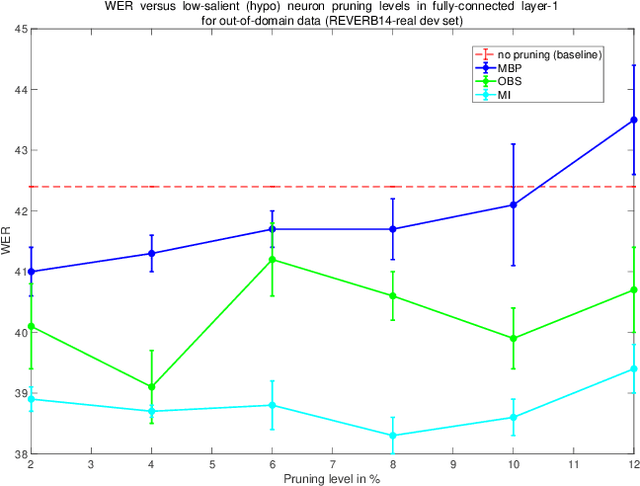

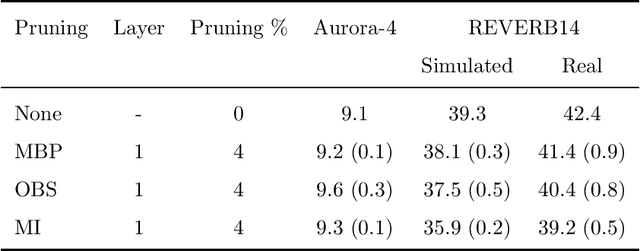

Unseen or out-of-domain data can seriously degrade the performance of a neural network model, indicating that the model fails to generalize. Neural network pruning can not only reduce a model's size but also improve its generalization capacity. Pruning approaches look for low-salient neurons that contribute little to a model's decision and can therefore be removed. This work investigates whether pruning approaches can detect neurons that are either high-salient (mostly active, or hyper) or low-salient (barely active, or hypo), and whether removing such neurons improves the model's generalization capacity. Traditional blind adaptation techniques update either the whole network or a subset of its layers, but have not explored selectively updating individual neurons across one or more layers. Focusing on the fully connected layers of a convolutional neural network (CNN), this work shows that it may be possible to selectively adapt certain neurons (the hyper and hypo neurons) first, followed by full-network fine-tuning. Using automatic speech recognition as the task, this work demonstrates how removing hyper and hypo neurons can improve performance on out-of-domain speech data, and how selective neuron adaptation can yield better performance than traditional blind model adaptation.
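The idea of flagging mostly active (hyper) and barely active (hypo) neurons in a fully connected layer, then either masking them out or updating only them, can be illustrated with a minimal NumPy sketch. Everything concrete here is an assumption made for illustration and is not taken from the paper: activity is measured as the fraction of samples on which a post-ReLU output is positive, the thresholds `low` and `high` are arbitrary, and the function names `find_hyper_hypo` and `masked_update` are hypothetical.

```python
import numpy as np

def find_hyper_hypo(activations, low=0.05, high=0.95):
    """Flag hyper and hypo neurons of one fully connected layer.

    activations: array of shape (num_samples, num_neurons) holding
    post-ReLU outputs. The firing-rate criterion and both thresholds
    are illustrative assumptions, not the paper's saliency measure.
    """
    # Fraction of samples on which each neuron produces a positive output.
    rate = (activations > 0).mean(axis=0)
    hyper = rate >= high   # mostly active
    hypo = rate <= low     # barely active
    return hyper, hypo

def masked_update(weights, grad, selected, lr=0.1):
    """One gradient step that touches only the selected neurons.

    weights, grad: arrays of shape (num_inputs, num_neurons).
    selected: boolean array of shape (num_neurons,).
    """
    # Zero the gradient for every column whose neuron is not selected.
    return weights - lr * grad * selected[np.newaxis, :]

# Toy layer with 3 neurons observed over 4 samples:
# neuron 0 always fires, neuron 1 never fires, neuron 2 fires half the time.
acts = np.array([
    [1.0, 0.0, 0.5],
    [2.0, 0.0, 0.0],
    [0.3, 0.0, 0.7],
    [1.5, 0.0, 0.0],
])
hyper, hypo = find_hyper_hypo(acts)

# Pruning view: keep only neurons that are neither hyper nor hypo.
keep = ~(hyper | hypo)
pruned_acts = acts * keep[np.newaxis, :]

# Selective-adaptation view: update only the hyper and hypo neurons.
weights = np.ones((2, 3))
grad = np.ones((2, 3))
updated = masked_update(weights, grad, hyper | hypo)
```

In this sketch the same boolean masks serve both uses described above: multiplying activations by `keep` mimics removing the flagged neurons, while passing `hyper | hypo` to `masked_update` restricts an adaptation step to those neurons, after which a full-network fine-tuning pass would update all weights.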
