Interflow: Aggregating Multi-layer Feature Mappings with Attention Mechanism

Jul 13, 2021

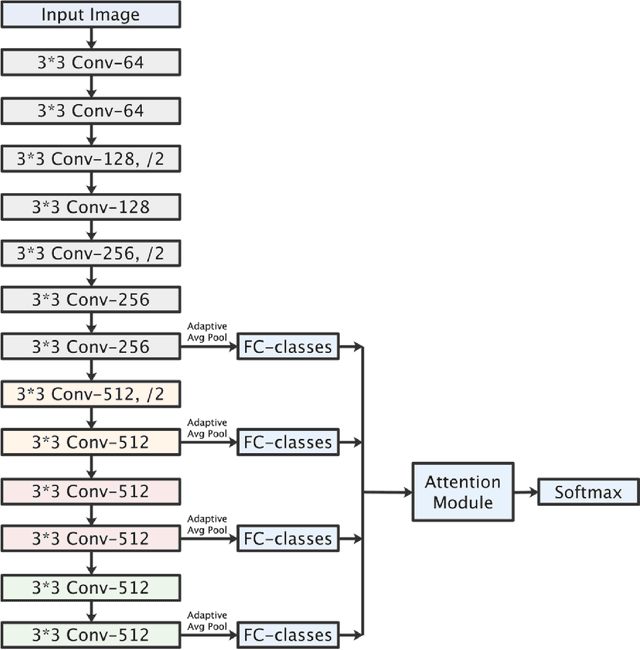

Traditionally, CNN models have a hierarchical structure and use the feature mapping of the last layer to produce the prediction output. However, it can be difficult to determine the optimal network depth and to make the middle layers learn distinctive features. This paper proposes the Interflow algorithm, designed specifically for traditional CNN models. Interflow divides a CNN into several stages according to depth and makes a prediction from the feature mappings of each stage. We then feed these prediction branches into an attention module, which learns a weight for each branch, aggregates the branches, and produces the final output. By weighting and fusing the features learned in both shallower and deeper layers, Interflow makes effective use of the feature information at each stage, enables the middle layers to learn more distinctive features, and enhances the model's representation ability. In addition, Interflow can alleviate the vanishing-gradient problem, reduce the difficulty of choosing the network depth, and mitigate possible over-fitting by introducing an attention mechanism. As a byproduct, it can also avoid network degradation. Compared with the original models, CNN models with Interflow achieve higher test accuracy on multiple benchmark datasets.
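
The aggregation step described above can be illustrated with a minimal sketch. The abstract does not specify the attention module, so the code assumes a deliberate simplification: one scalar score per prediction branch, turned into weights by a softmax, followed by a weighted sum of the branch logits. The names `softmax`, `aggregate_branches`, `branch_logits`, and `branch_scores` are hypothetical and not taken from the paper, and in a real model the scores would be learned rather than fixed.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_branches(branch_logits, branch_scores):
    """Fuse per-stage class logits with attention weights.

    branch_logits: one list of class logits per stage (hypothetical input).
    branch_scores: one scalar attention score per stage; assumed to be a
        learned parameter in practice, fixed here for illustration.
    Returns the fused logits and the attention weights used.
    """
    if len(branch_logits) != len(branch_scores):
        raise ValueError("need exactly one score per prediction branch")
    weights = softmax(branch_scores)
    num_classes = len(branch_logits[0])
    fused = [
        sum(w * logits[c] for w, logits in zip(weights, branch_logits))
        for c in range(num_classes)
    ]
    return fused, weights


# Three stages (shallow, middle, deep), each predicting over three classes.
branch_logits = [
    [2.0, 0.0, 0.0],
    [0.0, 2.0, 0.0],
    [0.0, 0.0, 2.0],
]
# Equal scores give equal weights, so the result is a plain average.
fused, weights = aggregate_branches(branch_logits, [0.0, 0.0, 0.0])
```

Raising the score of one branch shifts weight toward that stage's prediction, which is how a module of this kind could favor deeper or shallower features depending on the data.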
