Incremental Sense Weight Training for the Interpretation of Contextualized Word Embeddings


Dec 18, 2019

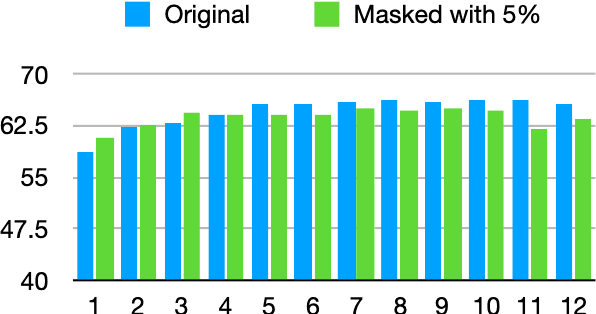

We present a novel online algorithm that learns the essence of each dimension in word embeddings by minimizing the within-group distance of contextualized embedding groups. Three state-of-the-art neural language models, Flair, ELMo, and BERT, are used to generate contextualized word embeddings, so that the same word type receives different embeddings in different contexts; these embeddings are then grouped by their senses as manually annotated in the SemCor dataset. We hypothesize that not all dimensions are equally important for downstream tasks, so our algorithm should be able to detect unessential dimensions and discard them without hurting performance. To verify this hypothesis, we first mask the dimensions that our algorithm determines to be unessential, apply the masked word embeddings to a word sense disambiguation (WSD) task, and compare the resulting performance against that achieved by the original embeddings. We experiment with several k-nearest-neighbor (KNN) approaches to establish strong baselines for WSD. Our results show that the masked word embeddings do not hurt performance and can improve it by 3%. Our work can serve as a basis for future research on the interpretability of contextualized embeddings.
