Improving Sample Efficiency with Normalized RBF Kernels


Jul 31, 2020

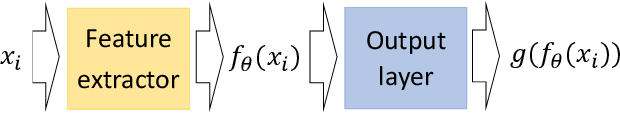

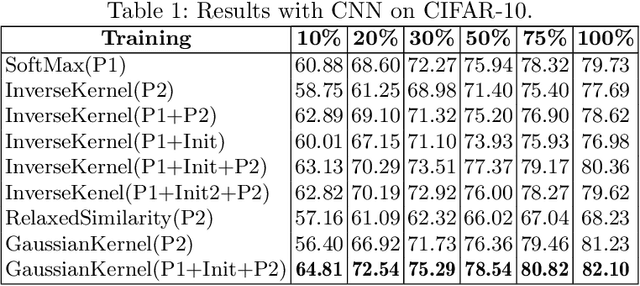

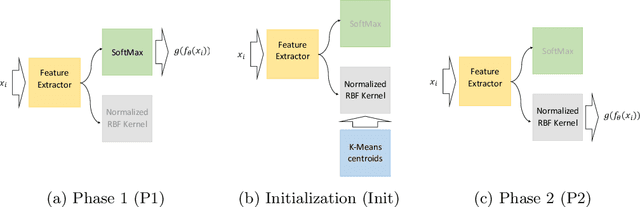

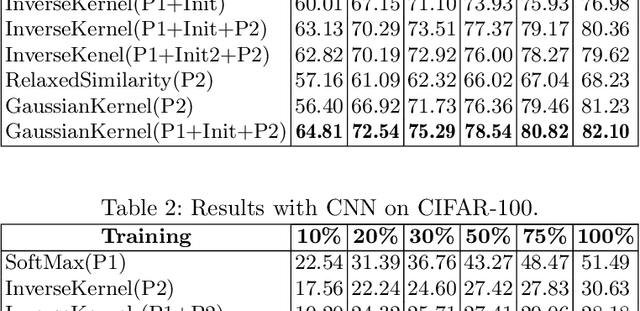

Learning more from less data is becoming increasingly important for deep learning models. This paper explores how neural networks with normalized Radial Basis Function (RBF) kernels can be trained to achieve better sample efficiency. Moreover, we show how this kind of output layer can find embedding spaces in which the classes are compact and well separated. To achieve this, we propose a two-phase method for training this type of neural network on classification tasks. Experiments on CIFAR-10 and CIFAR-100 show that, with the presented method, networks with normalized kernels as the output layer achieve higher sample efficiency, higher class compactness, and better class separability than networks with a SoftMax output layer.
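To make the idea of a normalized RBF output layer concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's implementation: the abstract does not give the kernel form, so the sketch assumes Gaussian kernels, one center per class, and a single shared width `gamma`. The function name `normalized_rbf` and the example centers are hypothetical.

```python
import math


def normalized_rbf(embedding, centers, gamma=1.0):
    """Return class scores from normalized Gaussian RBF kernels.

    Assumptions (not taken from the paper): one center per class and a
    shared kernel width `gamma`.
    """
    # Squared Euclidean distance from the embedding to each class center.
    sq_dists = [
        sum((e - c) ** 2 for e, c in zip(embedding, center))
        for center in centers
    ]
    # Shift by the smallest distance before exponentiating to avoid
    # underflow; the shift cancels out in the normalization below.
    d_min = min(sq_dists)
    kernels = [math.exp(-gamma * (d - d_min)) for d in sq_dists]
    # Normalize so the kernel activations sum to one.
    total = sum(kernels)
    return [k / total for k in kernels]


# Hypothetical 2-D embedding space with three class centers.
centers = [[0.0, 0.0], [4.0, 0.0], [0.0, 4.0]]
probs = normalized_rbf([0.5, 0.2], centers)
predicted_class = probs.index(max(probs))
```

Under these assumptions, an embedding receives a high score for a class only when it lies close to that class's center, which is one intuition for why such an output layer can favor compact, well-separated class clusters.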
