Improved Weighted Random Forest for Classification Problems

Paper and Code

Sep 01, 2020

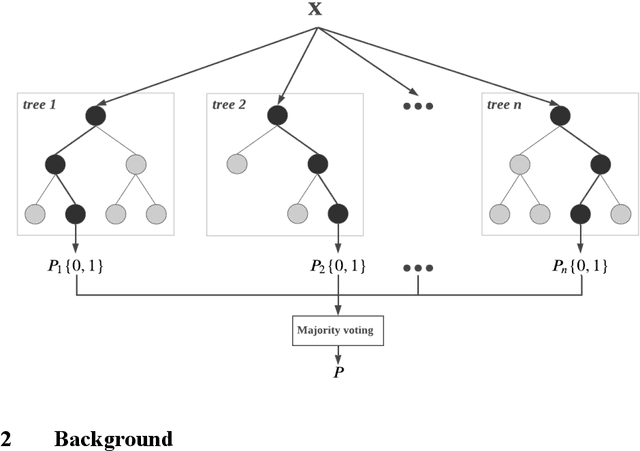

Several studies have shown that combining machine learning models in an appropriate way will introduce improvements in the individual predictions made by the base models. The key to make well-performing ensemble model is in the diversity of the base models. Of the most common solutions for introducing diversity into the decision trees are bagging and random forest. Bagging enhances the diversity by sampling with replacement and generating many training data sets, while random forest adds selecting a random number of features as well. This has made the random forest a winning candidate for many machine learning applications. However, assuming equal weights for all base decision trees does not seem reasonable as the randomization of sampling and input feature selection may lead to different levels of decision-making abilities across base decision trees. Therefore, we propose several algorithms that intend to modify the weighting strategy of regular random forest and consequently make better predictions. The designed weighting frameworks include optimal weighted random forest based on ac-curacy, optimal weighted random forest based on the area under the curve (AUC), performance-based weighted random forest, and several stacking-based weighted random forest models. The numerical results show that the proposed models are able to introduce significant improvements compared to regular random forest.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge