Implementation of Stochastic Quasi-Newton's Method in PyTorch

Paper and Code

May 07, 2018

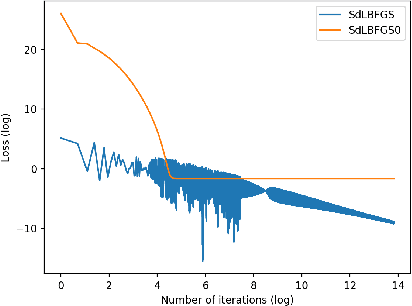

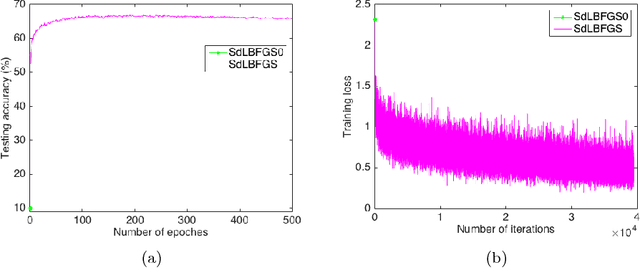

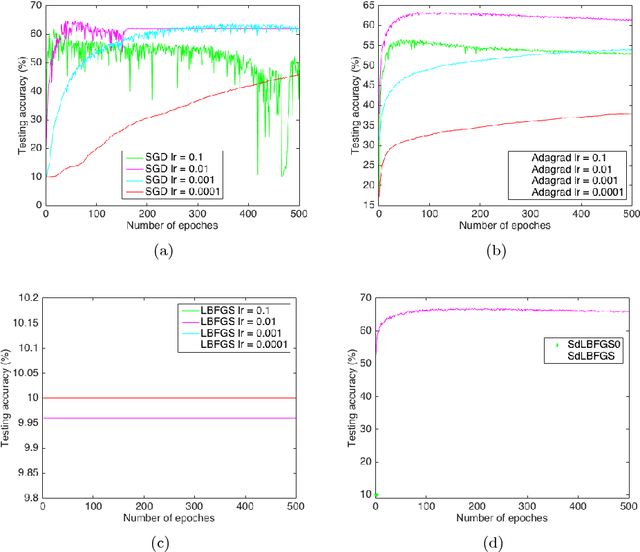

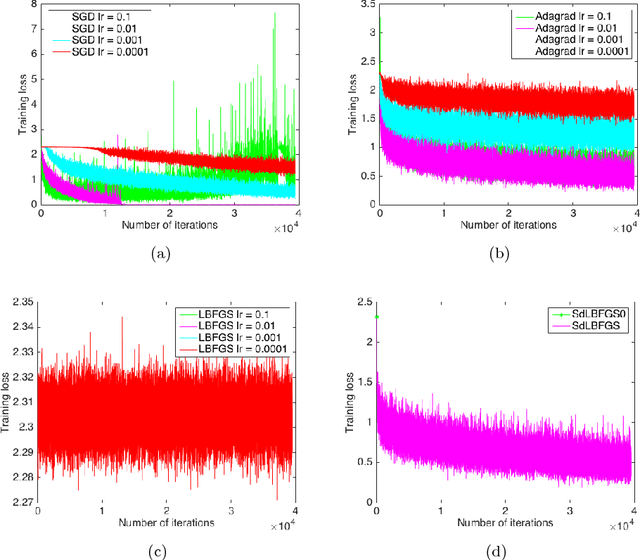

In this paper, we implement the Stochastic Damped LBFGS (SdLBFGS) for stochastic non-convex optimization. We make two important modifications to the original SdLBFGS algorithm. First, by initializing the Hessian at each step using an identity matrix, the algorithm converges better than original algorithm. Second, by performing direction normalization we could gain stable optimization procedure without line search. Experiments on minimizing a 2D non-convex function shows that our improved algorithm converges better than original algorithm, and experiments on the CIFAR10 and MNIST datasets show that our improved algorithm works stably and gives comparable or even better testing accuracies than first order optimizers SGD, Adagrad, and second order optimizers LBFGS in PyTorch.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge