Hybrid RAG-empowered Multi-modal LLM for Secure Healthcare Data Management: A Diffusion-based Contract Theory Approach

Paper and Code

Jul 01, 2024

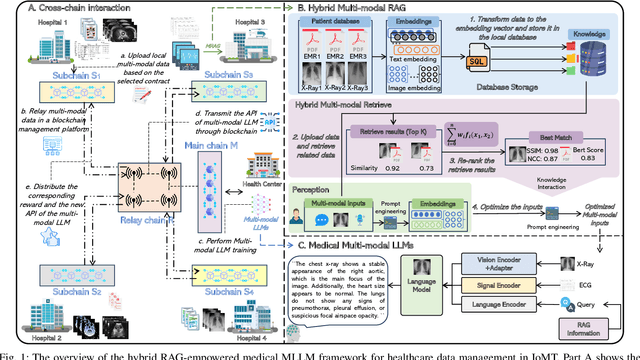

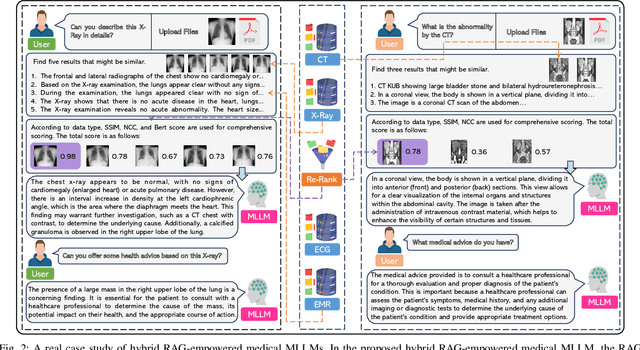

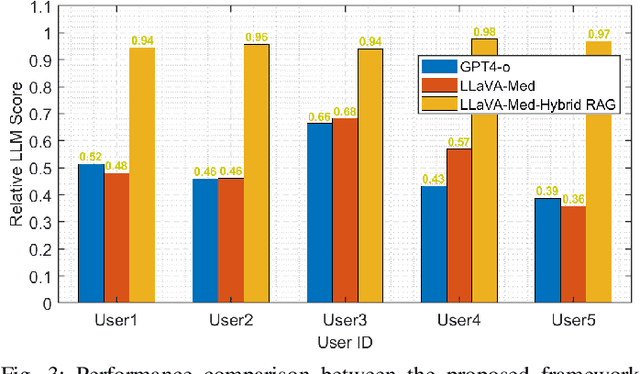

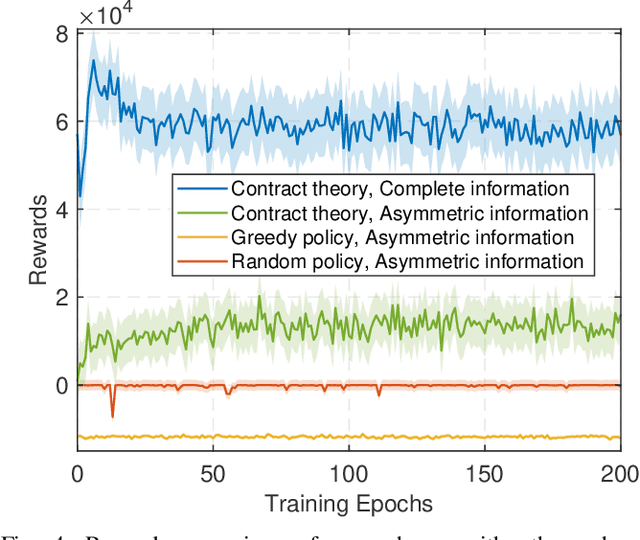

Secure data management and effective data sharing have become paramount in the rapidly evolving healthcare landscape. The advancement of generative artificial intelligence has positioned Multi-modal Large Language Models (MLLMs) as crucial tools for managing healthcare data. MLLMs can support multi-modal inputs and generate diverse types of content by leveraging large-scale training on vast amounts of multi-modal data. However, critical challenges persist in developing medical MLLMs, including healthcare data security and freshness issues, affecting the output quality of MLLMs. In this paper, we propose a hybrid Retrieval-Augmented Generation (RAG)-empowered medical MLLMs framework for healthcare data management. This framework leverages a hierarchical cross-chain architecture to facilitate secure data training. Moreover, it enhances the output quality of MLLMs through hybrid RAG, which employs multi-modal metrics to filter various unimodal RAG results and incorporates these retrieval results as additional inputs to MLLMs. Additionally, we employ age of information to indirectly evaluate the data freshness impact of MLLMs and utilize contract theory to incentivize healthcare data holders to share fresh data, mitigating information asymmetry in data sharing. Finally, we utilize a generative diffusion model-based reinforcement learning algorithm to identify the optimal contract for efficient data sharing. Numerical results demonstrate the effectiveness of the proposed schemes, which achieve secure and efficient healthcare data management.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge