Human Activity Recognition for Edge Devices


Mar 18, 2019

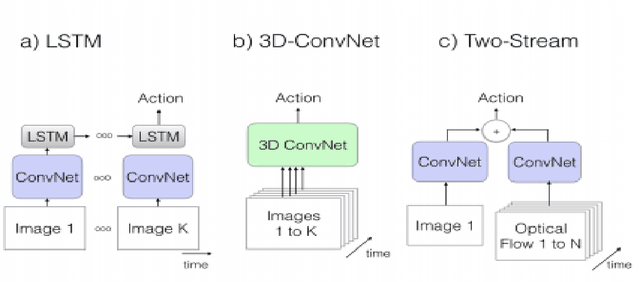

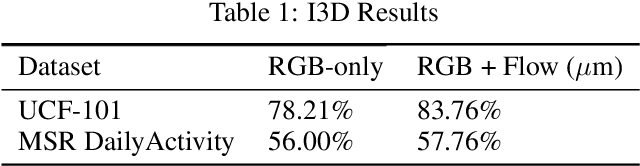

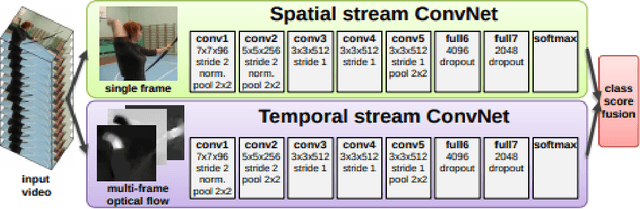

Video activity recognition has recently gained a lot of momentum with the release of the massive Kinetics datasets (Kinetics-400 and Kinetics-600). Architectures such as I3D and C3D have shown state-of-the-art performance for activity recognition. The major pitfall of these state-of-the-art networks is that they require a lot of compute. In this paper we explore how to achieve results comparable to these networks on edge devices. We primarily explore two architectures: I3D and Temporal Segment Network. We show that comparable results can be achieved with one tenth of the memory usage by changing the testing procedure. We also report results with a ResNet backbone in addition to the original Inception architecture. Specifically, we achieve 84.54% top-1 accuracy on the UCF-101 dataset using only RGB frames.
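The abstract does not spell out how the testing procedure was changed, so the following is only an illustrative sketch, not the authors' method. It shows the segment-based sparse sampling idea generally associated with Temporal Segment Networks: score a few frames spread across the video instead of every frame, then average the per-frame class scores and take the top-1 class. The function names `segment_center_indices`, `average_scores`, and `top1` are hypothetical, and the scores in the test are made-up inputs rather than results from the paper.

```python
from typing import List, Sequence


def segment_center_indices(num_frames: int, num_segments: int) -> List[int]:
    """Split a video into equal-length segments and return the index of the
    centre frame of each segment (hypothetical helper, not from the paper)."""
    if num_frames <= 0 or num_segments <= 0:
        raise ValueError("num_frames and num_segments must be positive")
    seg_len = num_frames / num_segments
    # Clamp to the last valid frame so very short videos still work.
    return [
        min(num_frames - 1, int(seg_len * i + seg_len / 2))
        for i in range(num_segments)
    ]


def average_scores(per_frame_scores: Sequence[Sequence[float]]) -> List[float]:
    """Average class scores over the sampled frames (simple consensus)."""
    if not per_frame_scores:
        raise ValueError("need at least one score vector")
    num_classes = len(per_frame_scores[0])
    totals = [0.0] * num_classes
    for scores in per_frame_scores:
        if len(scores) != num_classes:
            raise ValueError("all score vectors must have the same length")
        for c, s in enumerate(scores):
            totals[c] += s
    return [t / len(per_frame_scores) for t in totals]


def top1(scores: Sequence[float]) -> int:
    """Return the index of the highest-scoring class."""
    return max(range(len(scores)), key=lambda c: scores[c])
```

With this kind of sampling, only `num_segments` frames need to be held in memory and passed through the network per video, which is one plausible way a test-time change could reduce memory use; whether the paper does exactly this is an assumption.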
