Higher Order Generalization Error for First Order Discretization of Langevin Diffusion


Feb 11, 2021

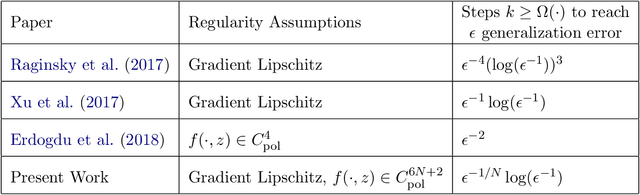

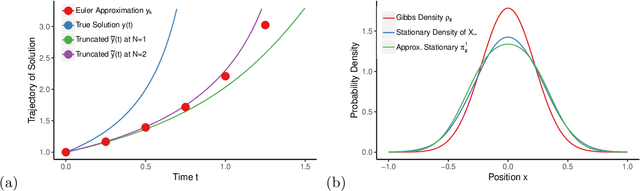

We propose a novel approach to analyzing the generalization error of discretizations of Langevin diffusion, such as stochastic gradient Langevin dynamics (SGLD). For an $\epsilon$ tolerance of expected generalization error, it is known that a first order discretization can reach this target if we run $\Omega(\epsilon^{-1} \log (\epsilon^{-1}) )$ iterations with $\Omega(\epsilon^{-1})$ samples. In this article, we show that with additional smoothness assumptions, even first order methods can achieve arbitrarily low runtime complexity. More precisely, for each $N>0$, we provide a sufficient smoothness condition on the loss function such that a first order discretization can reach $\epsilon$ expected generalization error given $\Omega( \epsilon^{-1/N} \log (\epsilon^{-1}) )$ iterations with $\Omega(\epsilon^{-1})$ samples.
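As a rough illustration of what "first order discretization of Langevin diffusion" means, here is a minimal SGLD sketch. It is not the paper's method or analysis. It assumes a hypothetical toy loss $\ell(\theta; z) = \tfrac{1}{2}(\theta - z)^2$ in one dimension and an inverse temperature $\beta$, so each step is one Euler-Maruyama step of $d\theta_t = -\nabla F_n(\theta_t)\,dt + \sqrt{2/\beta}\,dW_t$ with the full gradient replaced by a minibatch estimate. For this loss the Gibbs measure $\propto e^{-\beta F_n(\theta)}$ is Gaussian with mean equal to the sample mean and variance $1/\beta$, which gives something to check against. The function names `sgld` and `iteration_scaling`, and the step size, batch size and $\beta$ values, are all illustrative assumptions. `iteration_scaling` drops the constants hidden by $\Omega(\cdot)$ and only shows how the rate $\epsilon^{-1/N}\log(\epsilon^{-1})$ changes with $N$.

```python
import math
import random
import statistics
from typing import List


def sgld(data: List[float], beta: float, step: float, batch_size: int,
         n_iters: int, theta0: float = 0.0, seed: int = 0) -> List[float]:
    """Hypothetical SGLD sketch for the toy loss l(theta; z) = 0.5 * (theta - z)**2.

    Each iterate is one first order (Euler-Maruyama) step of
    d theta = -grad F_n(theta) dt + sqrt(2 / beta) dW,
    where grad F_n is replaced by an unbiased minibatch estimate.
    """
    rng = random.Random(seed)
    theta = theta0
    noise_scale = math.sqrt(2.0 * step / beta)  # std of the injected noise
    trajectory = []
    for _ in range(n_iters):
        # Minibatch sampled with replacement: unbiased estimate of grad F_n.
        batch = [rng.choice(data) for _ in range(batch_size)]
        grad = theta - sum(batch) / batch_size
        # Gradient step plus Gaussian noise with variance 2 * step / beta.
        theta = theta - step * grad + noise_scale * rng.gauss(0.0, 1.0)
        trajectory.append(theta)
    return trajectory


def iteration_scaling(eps: float, order: float) -> float:
    """eps**(-1/order) * log(1/eps), i.e. the iteration rate with constants dropped."""
    return eps ** (-1.0 / order) * math.log(1.0 / eps)


# Usage: synthetic data, then compare SGLD samples with the Gibbs measure
# N(sample mean, 1 / beta) of this toy loss.
data_rng = random.Random(1)
data = [data_rng.gauss(2.0, 1.0) for _ in range(200)]
beta = 10.0
samples = sgld(data, beta=beta, step=0.01, batch_size=10, n_iters=50_000)[5_000:]
print(statistics.fmean(samples), sum(data) / len(data))  # means should be close
print(statistics.pvariance(samples), 1.0 / beta)          # variances should be close
print(iteration_scaling(0.01, 1), iteration_scaling(0.01, 2))
```

With a small step size the empirical variance sits slightly above $1/\beta$, since both the discretization and the minibatch noise inflate it a little. The last line shows that, ignoring constants, moving from $N = 1$ to $N = 2$ at $\epsilon = 0.01$ shrinks the rate by a factor of $10$.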
