Heuristical Comparison of Vision Transformers Against Convolutional Neural Networks for Semantic Segmentation on Remote Sensing Imagery

Paper and Code

Nov 14, 2024

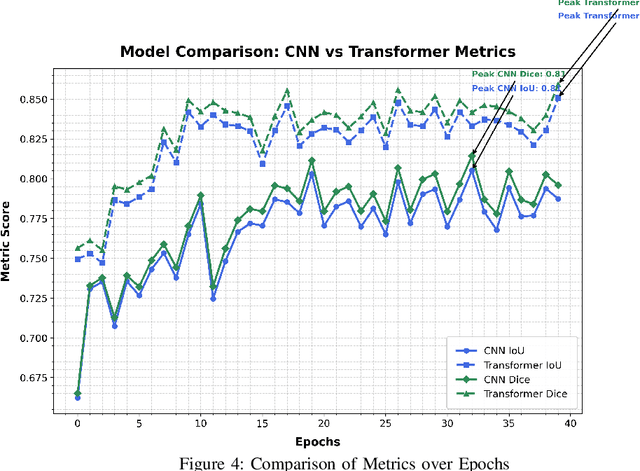

Vision Transformers (ViT) have recently brought a new wave of research in the field of computer vision. These models have done particularly well in the field of image classification and segmentation. Research on semantic and instance segmentation has emerged to accelerate with the inception of the new architecture, with over 80\% of the top 20 benchmarks for the iSAID dataset being either based on the ViT architecture or the attention mechanism behind its success. This paper focuses on the heuristic comparison of three key factors of using (or not using) ViT for semantic segmentation of remote sensing aerial images on the iSAID. The experimental results observed during the course of the research were under the scrutinization of the following objectives: 1. Use of weighted fused loss function for the maximum mean Intersection over Union (mIoU) score, Dice score, and minimization or conservation of entropy or class representation, 2. Comparison of transfer learning on Meta's MaskFormer, a ViT-based semantic segmentation model, against generic UNet Convolutional Neural Networks (CNNs) judged over mIoU, Dice scores, training efficiency, and inference time, and 3. What do we lose for what we gain? i.e., the comparison of the two models against current state-of-art segmentation models. We show the use of the novel combined weighted loss function significantly boosts the CNN model's performance capacities as compared to transfer learning the ViT. The code for this implementation can be found on \url{https://github.com/ashimdahal/ViT-vs-CNN-ImageSegmentation}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge