Harmonization and Evaluation; Tweaking the Parameters on Human Listeners

Aug 31, 2022

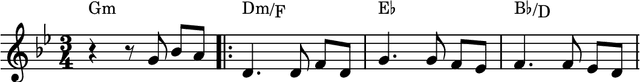

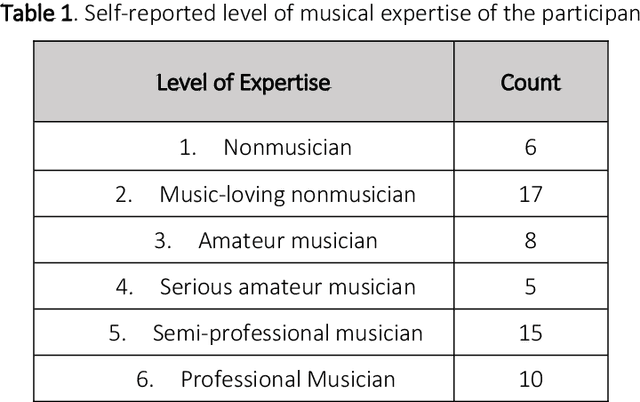

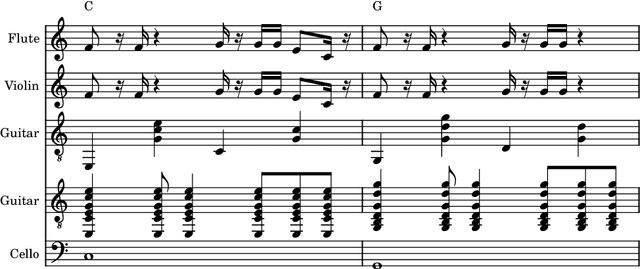

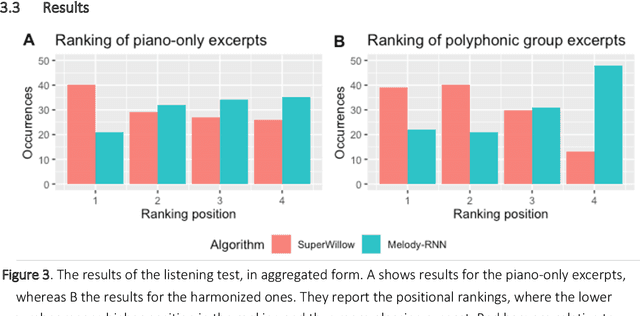

Kansei models have been used to study the connotative meaning of music. In multimedia and mixed reality, automatically generated melodies are increasingly common, so it is important to consider whether this music communicates feelings, and which ones. Evaluating computer-generated melodies is not a trivial task. Given the difficulty of defining useful quantitative metrics for the quality of a generated musical piece, researchers often resort to human evaluation, in which judges are typically asked to rate a set of generated pieces alongside some benchmark pieces, usually composed by humans. Although this kind of evaluation is relatively common, the experiment must be designed with care, since human judges can be influenced by a variety of factors. In this paper, we examine the impact of harmony in the audio files that judges evaluate, to see whether the presence of an accompaniment can change the evaluation of generated melodies. To do so, we generate melodies with two different algorithms, harmonize them with an automatic tool that we designed for this experiment, and ask more than sixty participants to evaluate them. Statistical analyses show that harmonization does affect the evaluation process by emphasizing the differences among judgements.
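The abstract does not describe how the authors' harmonization tool works. Purely as an illustration of what automatic harmonization can mean, and not as the authors' method, a minimal rule-based harmonizer might assign one chord per bar by choosing, from a small chord vocabulary, the triad that contains the most melody notes of that bar. The function name `harmonize`, the vocabulary (the I, IV and V triads of C major), the one-chord-per-bar rule and the example melody are all hypothetical assumptions made for this sketch.

```python
from typing import Dict, List, Tuple

# Hypothetical chord vocabulary (an assumption, not the paper's tool):
# the primary triads of C major as pitch-class sets (0 = C, 2 = D, 4 = E,
# 5 = F, 7 = G, 9 = A, 11 = B).
TRIADS: Dict[str, Tuple[int, ...]] = {
    "C": (0, 4, 7),   # I
    "F": (5, 9, 0),   # IV
    "G": (7, 11, 2),  # V
}


def harmonize(bars: List[List[int]]) -> List[str]:
    """Pick one chord per bar: the triad sharing the most pitch classes
    with the bar's melody notes (given as MIDI note numbers). Ties go to
    the chord listed first in TRIADS, so the tonic wins ambiguous bars."""
    chords = []
    for bar in bars:
        pitch_classes = [note % 12 for note in bar]

        def score(name: str) -> int:
            # Count melody notes (with repetition) that belong to the triad.
            return sum(pc in TRIADS[name] for pc in pitch_classes)

        # max() returns the first maximal key, which preserves the tie rule.
        chords.append(max(TRIADS, key=score))
    return chords


if __name__ == "__main__":
    # Made-up four-bar melody in C major, for illustration only.
    melody = [[60, 64, 67], [65, 69, 72], [67, 71, 74], [72, 67, 64]]
    print(harmonize(melody))  # ['C', 'F', 'G', 'C']
```

Counting notes with repetition gives more weight to pitches that recur within a bar. A tool built for a listening experiment would likely also take metrical position, voice leading and a richer chord vocabulary into account.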
