Growing Artificial Neural Networks


Jun 11, 2020

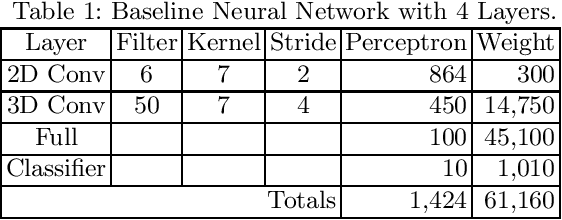

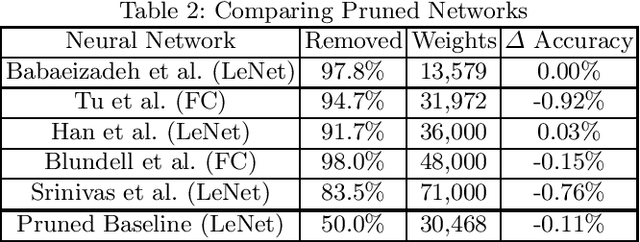

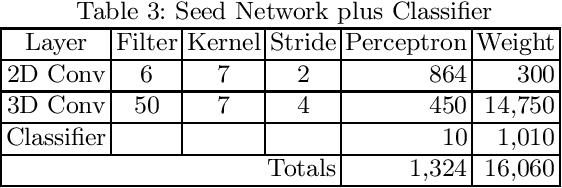

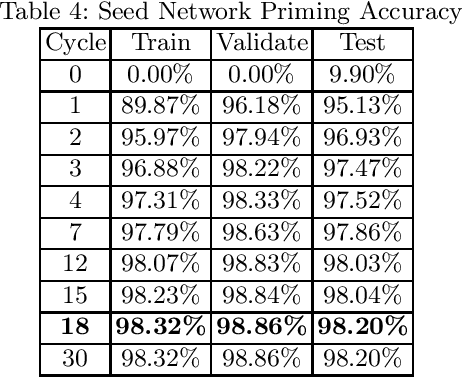

Pruning is a legitimate method for reducing the size of a neural network to fit in low size, weight, and power (SWaP) hardware, but the networks must be trained and pruned offline. We propose an algorithm, Artificial Neurogenesis (ANG), that grows rather than prunes the network and enables neural networks to be trained and executed in low-SWaP embedded hardware. ANG accomplishes this by using the training data to determine critical connections between layers before the actual training takes place. Our experiments use a modified LeNet-5 as a baseline neural network that achieves a test accuracy of 98.74% using a total of 61,160 weights. An ANG-grown network achieves a test accuracy of 98.80% with only 21,211 weights.
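To put the reported figures in perspective, the short sketch below works out the relative weight reduction and the accuracy difference from the numbers quoted in the abstract. The variable and function names are illustrative only and do not come from the paper; nothing here reproduces the ANG algorithm itself.

```python
# Figures quoted in the abstract.
baseline_weights = 61_160   # modified LeNet-5 baseline
ang_weights = 21_211        # ANG-grown network
baseline_acc = 98.74        # test accuracy, percent
ang_acc = 98.80             # test accuracy, percent


def weight_reduction(baseline: int, grown: int) -> float:
    """Fraction of the baseline weights that the grown network does not need."""
    return 1.0 - grown / baseline


reduction = weight_reduction(baseline_weights, ang_weights)
size_ratio = baseline_weights / ang_weights   # how many times larger the baseline is
accuracy_delta = ang_acc - baseline_acc       # percentage points

print(f"weight reduction: {reduction:.1%}")
print(f"baseline / ANG size ratio: {size_ratio:.2f}x")
print(f"accuracy change: {accuracy_delta:+.2f} percentage points")
```

By this arithmetic the ANG-grown network uses roughly a third of the baseline's weights while reporting a slightly higher test accuracy.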

* 14 pages. Accepted for publication in Springer Nature, Book Series: Transactions on Computational Science and Computational Intelligence, Advances in Artificial Intelligence and Applied Cognitive Computing. Springer ID: 89066307 (Book ID: 495585_1_En)
