Gradient Norm Regularization Second-Order Algorithms for Solving Nonconvex-Strongly Concave Minimax Problems


Nov 24, 2024

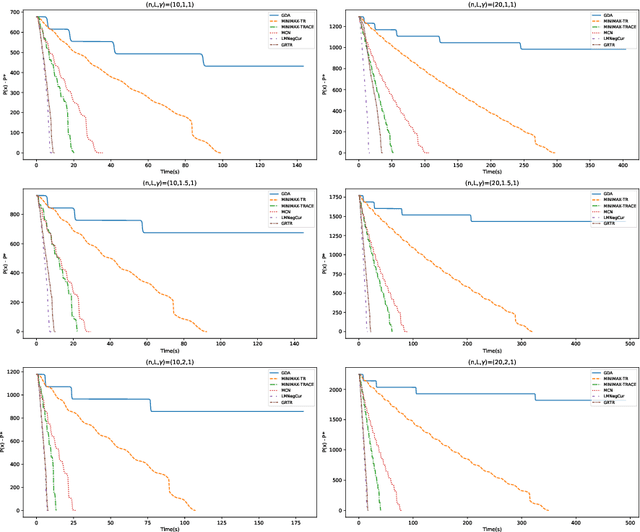

In this paper, we study second-order algorithms for nonconvex-strongly concave minimax problems, which have attracted considerable attention in recent years, especially in machine learning. We propose a gradient norm regularized trust region (GRTR) algorithm in which the trust region subproblem at each iteration uses a regularized version of the Hessian matrix, with both the regularization coefficient and the radius of the ball constraint proportional to the square root of the gradient norm. We prove that the iteration complexity of GRTR for obtaining an $\mathcal{O}(\epsilon,\sqrt{\epsilon})$-second-order stationary point is upper bounded by $\tilde{\mathcal{O}}(\rho^{0.5}\kappa^{1.5}\epsilon^{-3/2})$, where $\rho$ and $\kappa$ are the Lipschitz constant of the Jacobian matrix and the condition number of the objective function, respectively; this matches the best known iteration complexity of second-order methods for nonconvex-strongly concave minimax problems. We further propose a Levenberg-Marquardt algorithm (LMNegCur) that uses a gradient norm regularization coefficient and corrects the iteration direction with a negative curvature direction, so it does not need to solve a trust region subproblem at each iteration. We also prove that LMNegCur reaches an $\mathcal{O}(\epsilon,\sqrt{\epsilon})$-second-order stationary point within $\tilde{\mathcal{O}}(\rho^{0.5}\kappa^{1.5}\epsilon^{-3/2})$ iterations. Numerical results show the efficiency of both proposed algorithms.
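To make the gradient norm regularization idea concrete, the following is a minimal sketch of a single Levenberg-Marquardt-style step with a negative curvature fallback. It is an illustration under stated assumptions, not the paper's LMNegCur algorithm: it treats a plain minimization problem with an explicitly given gradient and Hessian (the inner maximization over the strongly concave variable is ignored), and the function name `regularized_step`, the constant `c`, the switching threshold, and the step length along the negative curvature direction are all hypothetical choices.

```python
import numpy as np

def regularized_step(grad, hess, c=1.0):
    """One illustrative gradient-norm-regularized step (hypothetical helper)."""
    g_norm = np.linalg.norm(grad)
    # Regularization coefficient proportional to sqrt of the gradient norm.
    sigma = c * np.sqrt(g_norm)

    # Smallest eigenvalue and its eigenvector (eigh returns ascending order).
    eigvals, eigvecs = np.linalg.eigh(hess)
    lam_min, v_min = eigvals[0], eigvecs[:, 0]

    # Stationary with no negative curvature: nothing to do.
    if g_norm == 0.0 and lam_min >= 0.0:
        return np.zeros_like(grad)

    # Assumed switching rule: if the shifted Hessian is safely positive
    # definite, take the regularized Newton (Levenberg-Marquardt-style) step.
    if lam_min >= -0.5 * sigma:
        shifted = hess + sigma * np.eye(len(grad))
        return np.linalg.solve(shifted, -grad)

    # Otherwise move along the negative curvature direction, with the sign
    # chosen so that the step is not an ascent direction for the gradient.
    direction = -v_min if grad @ v_min > 0 else v_min
    return abs(lam_min) * direction
```

On a convex quadratic the function returns a damped Newton step, and at a saddle point with zero gradient it returns a direction of negative curvature, which is the kind of correction the abstract attributes to LMNegCur.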
