Geometric Decomposition of Feed Forward Neural Networks

Paper and Code

Dec 08, 2016

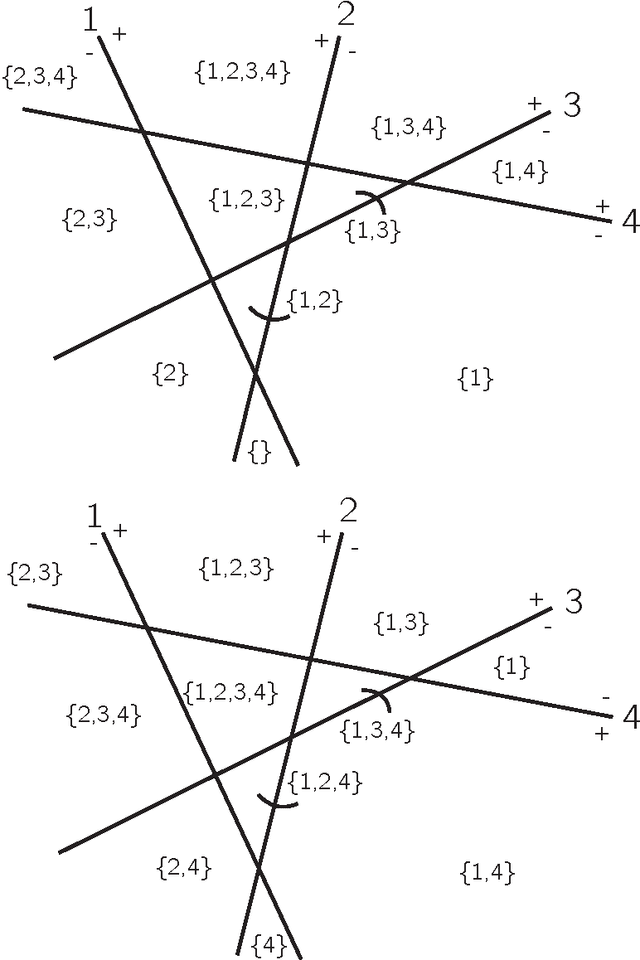

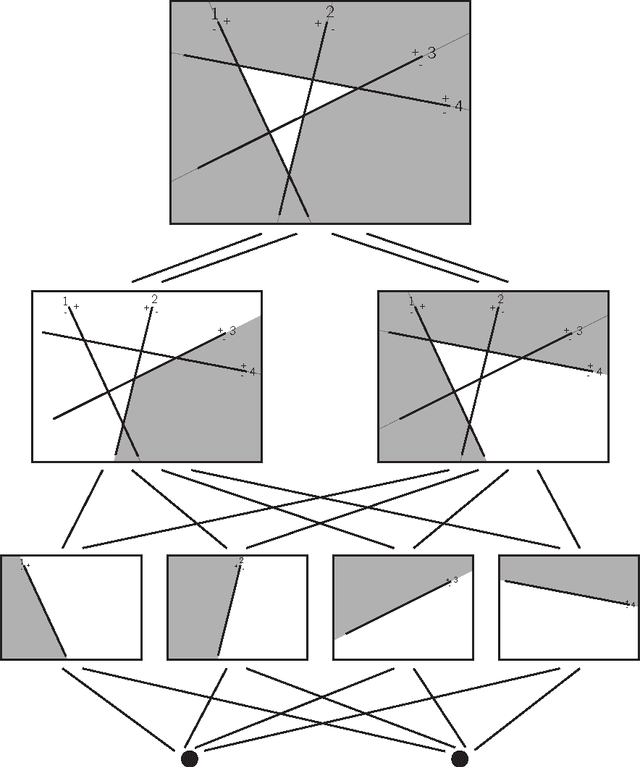

There have been several attempts to mathematically understand neural networks and many more from biological and computational perspectives. The field has exploded in the last decade, yet neural networks are still treated much like a black box. In this work we describe a structure that is inherent to a feed forward neural network. This will provide a framework for future work on neural networks to improve training algorithms, compute the homology of the network, and other applications. Our approach takes a more geometric point of view and is unlike other attempts to mathematically understand neural networks that rely on a functional perspective.

* 11 pages, 2 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge