Generative Adversarial Networks in Human Emotion Synthesis:A Review

Paper and Code

Nov 07, 2020

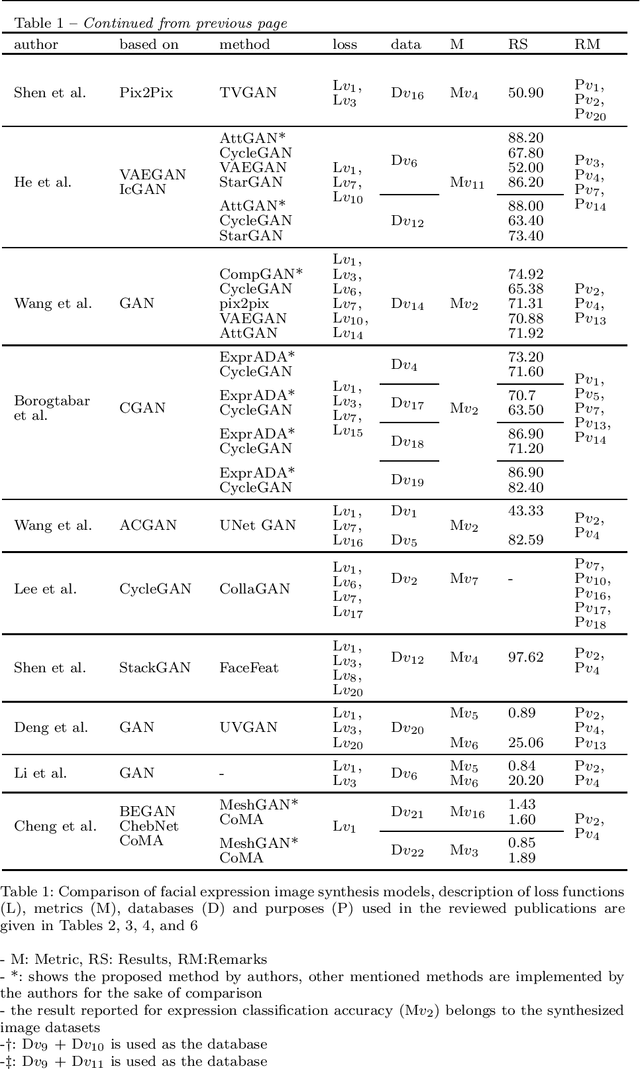

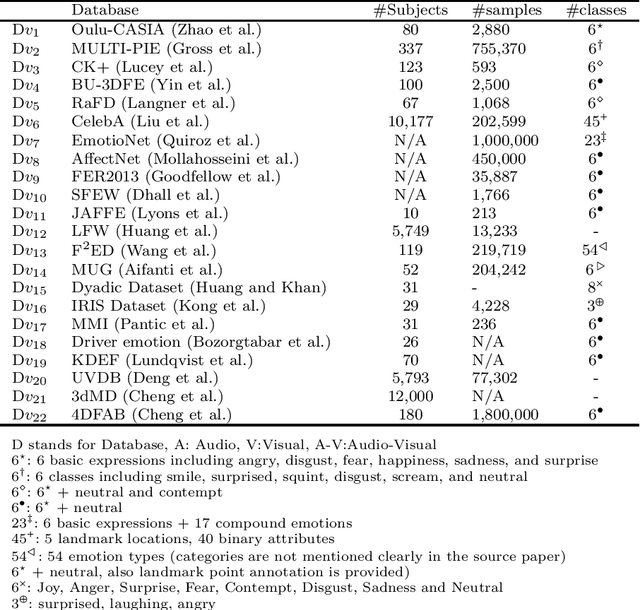

Synthesizing realistic data samples is of great value for both academic and industrial communities. Deep generative models have become an emerging topic in various research areas like computer vision and signal processing. Affective computing, a topic of a broad interest in computer vision society, has been no exception and has benefited from generative models. In fact, affective computing observed a rapid derivation of generative models during the last two decades. Applications of such models include but are not limited to emotion recognition and classification, unimodal emotion synthesis, and cross-modal emotion synthesis. As a result, we conducted a review of recent advances in human emotion synthesis by studying available databases, advantages, and disadvantages of the generative models along with the related training strategies considering two principal human communication modalities, namely audio and video. In this context, facial expression synthesis, speech emotion synthesis, and the audio-visual (cross-modal) emotion synthesis is reviewed extensively under different application scenarios. Gradually, we discuss open research problems to push the boundaries of this research area for future works.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge