General Framework for Reversible Data Hiding in Texts Based on Masked Language Modeling

Paper and Code

Jun 21, 2022

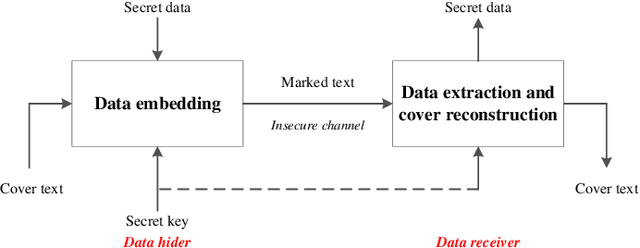

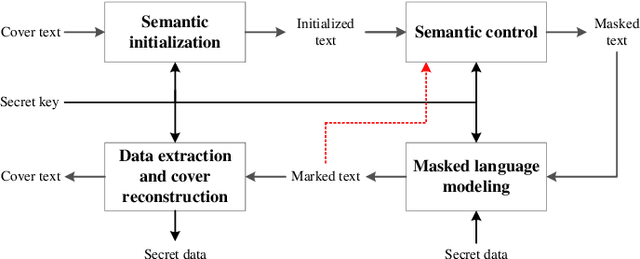

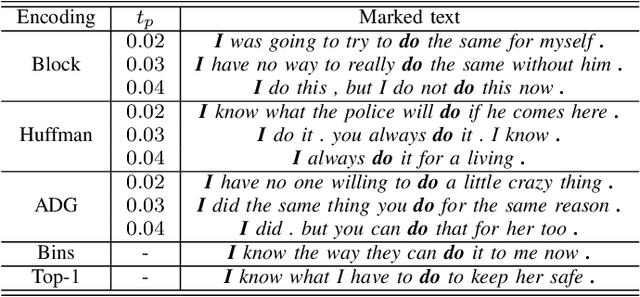

With the fast development of natural language processing, recent advances in information hiding focus on covertly embedding secret information into texts. These algorithms either modify a given cover text or directly generate a text containing secret information, which, however, are not reversible, meaning that the original text not carrying secret information cannot be perfectly recovered unless much side information are shared in advance. To tackle with this problem, in this paper, we propose a general framework to embed secret information into a given cover text, for which the embedded information and the original cover text can be perfectly retrieved from the marked text. The main idea of the proposed method is to use a masked language model to generate such a marked text that the cover text can be reconstructed by collecting the words of some positions and the words of the other positions can be processed to extract the secret information. Our results show that the original cover text and the secret information can be successfully embedded and extracted. Meanwhile, the marked text carrying secret information has good fluency and semantic quality, indicating that the proposed method has satisfactory security, which has been verified by experimental results. Furthermore, there is no need for the data hider and data receiver to share the language model, which significantly reduces the side information and thus has good potential in applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge