FPGA Implementation of Convolutional Neural Networks with Fixed-Point Calculations

Paper and Code

Aug 29, 2018

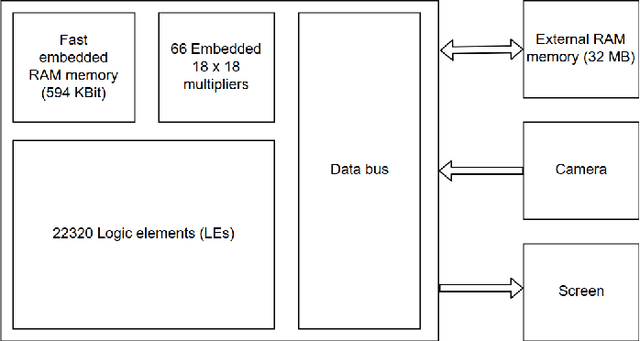

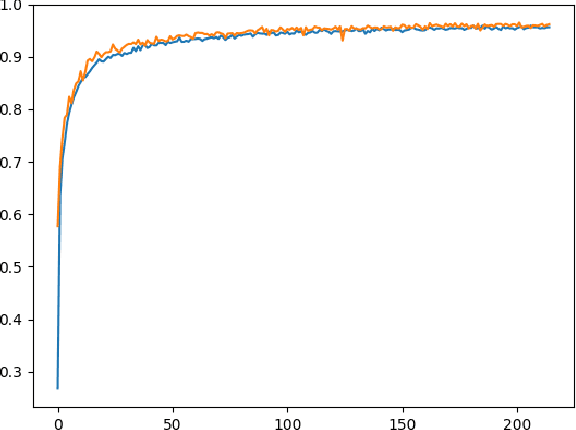

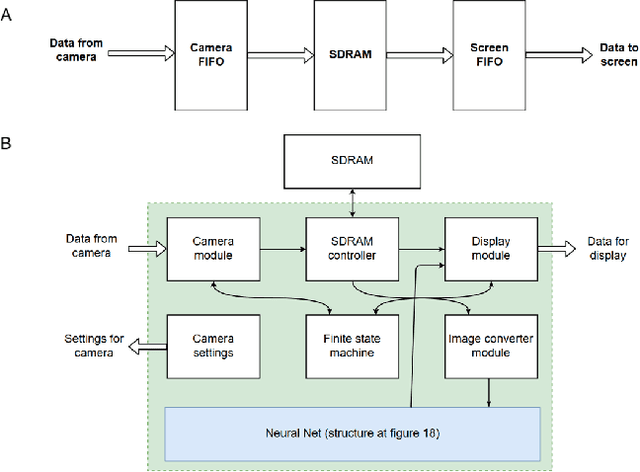

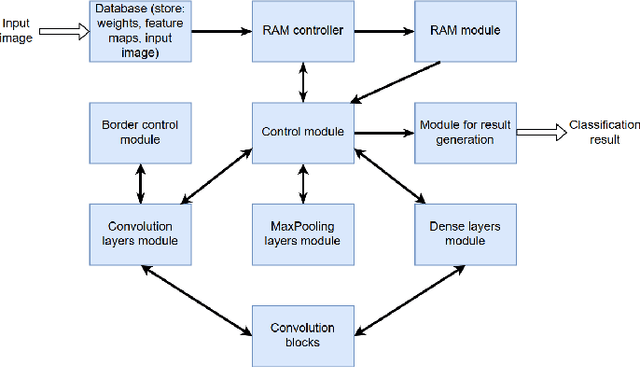

Neural network-based methods for image processing are becoming widely used in practical applications. Modern neural networks are computationally expensive and require specialized hardware, such as graphics processing units. Since such hardware is not always available in real life applications, there is a compelling need for the design of neural networks for mobile devices. Mobile neural networks typically have reduced number of parameters and require a relatively small number of arithmetic operations. However, they usually still are executed at the software level and use floating-point calculations. The use of mobile networks without further optimization may not provide sufficient performance when high processing speed is required, for example, in real-time video processing (30 frames per second). In this study, we suggest optimizations to speed up computations in order to efficiently use already trained neural networks on a mobile device. Specifically, we propose an approach for speeding up neural networks by moving computation from software to hardware and by using fixed-point calculations instead of floating-point. We propose a number of methods for neural network architecture design to improve the performance with fixed-point calculations. We also show an example of how existing datasets can be modified and adapted for the recognition task in hand. Finally, we present the design and the implementation of a floating-point gate array-based device to solve the practical problem of real-time handwritten digit classification from mobile camera video feed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge