FPGA-based AI Smart NICs for Scalable Distributed AI Training Systems

Paper and Code

Apr 22, 2022

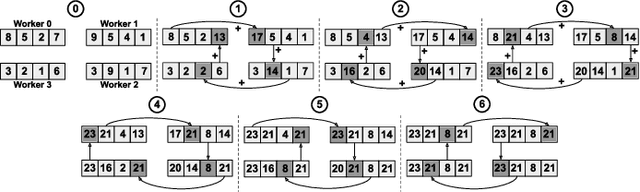

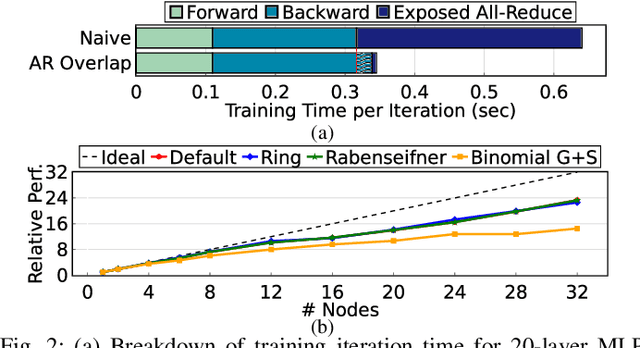

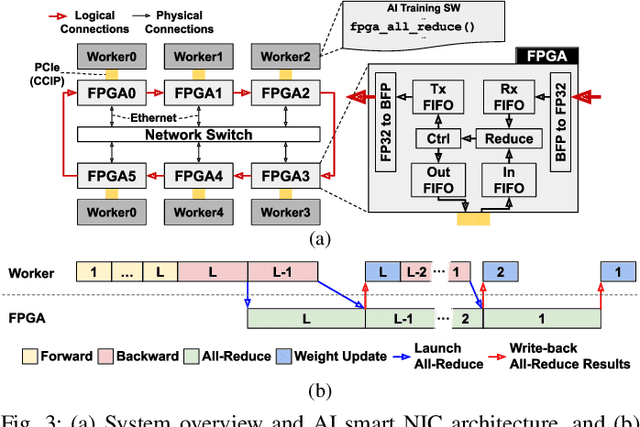

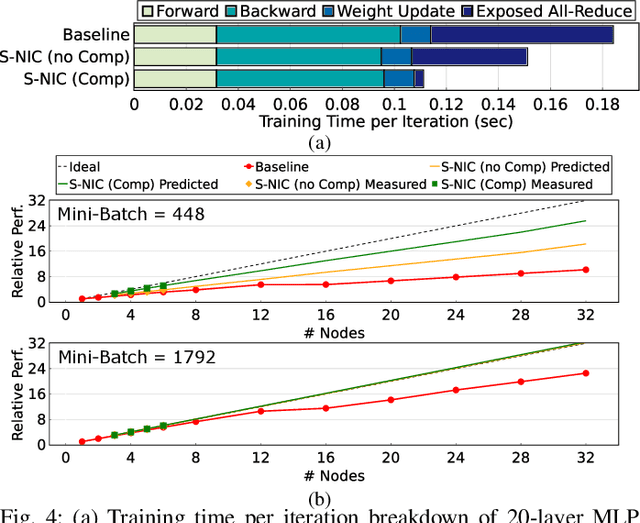

Rapid advances in artificial intelligence (AI) technology have led to significant accuracy improvements in a myriad of application domains, at the cost of larger and more compute-intensive models. Training such models on massive amounts of data typically requires scaling to many compute nodes and relies heavily on collective communication algorithms, such as all-reduce, to exchange the weight gradients between nodes. The overhead of these collective communication operations can bottleneck the performance of a distributed AI training system, and the effect becomes more pronounced as the number of nodes increases. In this paper, we first characterize the all-reduce overhead by profiling distributed AI training. We then propose a new smart network interface card (NIC) for distributed AI training systems that uses field-programmable gate arrays (FPGAs) to accelerate all-reduce operations and to optimize network bandwidth utilization via data compression. The AI smart NIC frees up the system's compute resources for the more compute-intensive tensor operations and increases the overall node-to-node communication efficiency. We take measurements on a prototype distributed AI training system comprising 6 compute nodes to evaluate the performance gains of our proposed FPGA-based AI smart NIC over a baseline system with regular NICs. We also use these measurements to validate an analytical model that we formulate to predict performance when scaling to larger systems. Our proposed FPGA-based AI smart NIC improves overall training performance by 1.6x at 6 nodes, with an estimated 2.5x improvement at 32 nodes, compared to the baseline system using conventional NICs.
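For readers unfamiliar with all-reduce, the following is a minimal sketch of what the operation computes: every node starts with its own gradient vector and ends with the element-wise sum across all nodes. The abstract does not say which all-reduce algorithm the smart NIC implements; the ring variant is used here only as a common illustration, and the names `ring_all_reduce` and `node_grads` are hypothetical. The code simulates the message exchange in a single process and does not model the FPGA, the network, or the compression.

```python
def ring_all_reduce(node_grads):
    """Simulate a ring all-reduce (sum) over per-node gradient lists.

    Illustrative assumption: nodes form a logical ring and node i only
    sends to node (i + 1) % n. This is not taken from the paper.
    """
    n = len(node_grads)
    length = len(node_grads[0])
    # Split each vector into n contiguous chunks (some may be empty).
    bounds = [(k * length // n, (k + 1) * length // n) for k in range(n)]
    bufs = [list(g) for g in node_grads]  # private copy per node

    # Phase 1, reduce-scatter: after n - 1 steps, node i holds the fully
    # summed chunk (i + 1) % n.
    for step in range(n - 1):
        # Snapshot all outgoing messages first so sends are simultaneous.
        sends = []
        for i in range(n):
            c = (i - step) % n
            lo, hi = bounds[c]
            sends.append((c, bufs[i][lo:hi]))
        for i in range(n):
            dst = (i + 1) % n
            c, data = sends[i]
            lo, _ = bounds[c]
            for k, v in enumerate(data):
                bufs[dst][lo + k] += v  # accumulate partial sum

    # Phase 2, all-gather: circulate the finished chunks around the ring.
    for step in range(n - 1):
        sends = []
        for i in range(n):
            c = (i + 1 - step) % n
            lo, hi = bounds[c]
            sends.append((c, bufs[i][lo:hi]))
        for i in range(n):
            dst = (i + 1) % n
            c, data = sends[i]
            lo, hi = bounds[c]
            bufs[dst][lo:hi] = data  # overwrite with the reduced chunk

    return bufs


if __name__ == "__main__":
    # Six simulated nodes, matching the prototype size in the abstract.
    grads = [[node + j for j in range(12)] for node in range(6)]
    reduced = ring_all_reduce(grads)
    print(reduced[0] == [sum(col) for col in zip(*grads)])
```

In this ring formulation each node sends 2(n - 1) chunks of roughly 1/n of the vector, so per-node traffic stays close to twice the gradient size as n grows, while the number of sequential communication steps grows linearly with n.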
