FlingBot: The Unreasonable Effectiveness of Dynamic Manipulation for Cloth Unfolding

Paper and Code

May 08, 2021

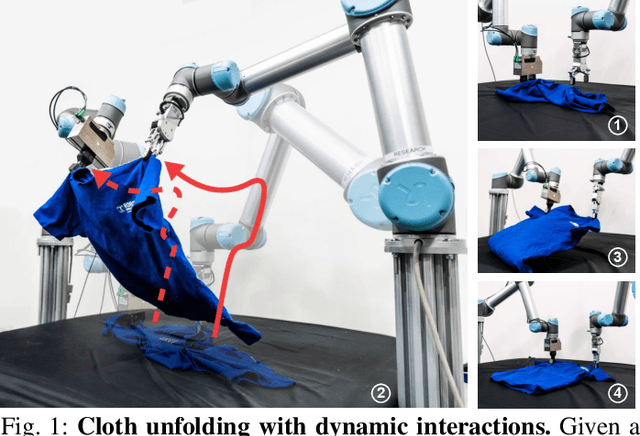

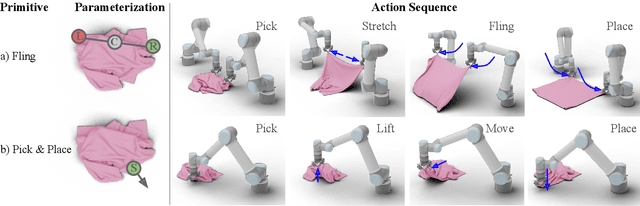

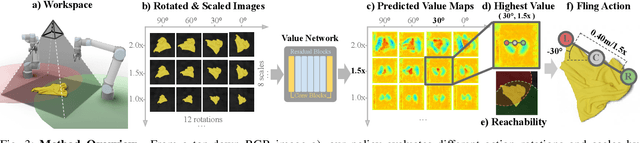

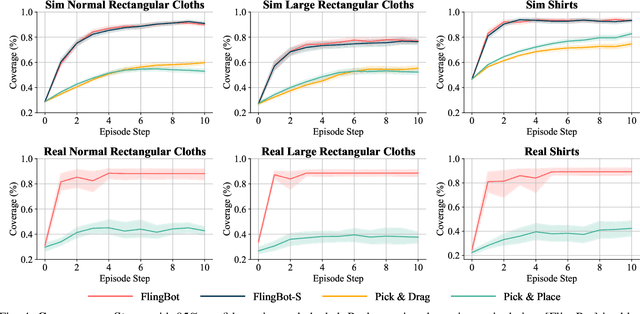

High-velocity dynamic actions (e.g., fling or throw) play a crucial role in our everyday interaction with deformable objects by improving our efficiency and effectively expanding our physical reach range. Yet most prior works have tackled cloth manipulation using exclusively single-arm quasi-static actions, which require a large number of interactions for challenging initial cloth configurations and strictly limit the maximum cloth size to the robot's reach range. In this work, we demonstrate the effectiveness of dynamic flinging actions for cloth unfolding. We propose a self-supervised learning framework, FlingBot, that learns from visual observations how to unfold a piece of fabric from arbitrary initial configurations using a pick, stretch, and fling primitive for a dual-arm setup. The final system achieves over 80% coverage within 3 actions on novel cloths, can unfold cloths larger than the system's reach range, and generalizes to T-shirts despite being trained only on rectangular cloths. We also fine-tuned FlingBot on a real-world dual-arm robot platform, where it increased cloth coverage 3.6 times more than the quasi-static baseline did. The simplicity of FlingBot, combined with its superior performance over quasi-static baselines, demonstrates the effectiveness of dynamic actions for deformable object manipulation. The project video is available at https://youtu.be/T4tDy5y_6ZM.
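The abstract reports results in terms of cloth coverage but does not define the metric. As a rough illustration only, the sketch below assumes coverage means the fraction of the fully flattened cloth's area that is visible in a top-down binary mask. The function name `cloth_coverage`, the mask format, and the `flattened_area` parameter are all hypothetical and are not taken from the FlingBot code.

```python
def cloth_coverage(mask, flattened_area):
    """Return visible cloth area divided by the area of the flattened cloth.

    Assumption (not from the paper): `mask` is a 2D list of 0/1 values from a
    top-down view, where 1 marks a cloth pixel, and `flattened_area` is the
    pixel count the same cloth occupies when laid completely flat.
    """
    if flattened_area <= 0:
        raise ValueError("flattened_area must be positive")
    # Count every pixel marked as cloth in the top-down mask.
    visible = sum(1 for row in mask for pixel in row if pixel)
    return visible / flattened_area


# A crumpled cloth showing 3 pixels out of a 4-pixel flattened footprint.
crumpled = [
    [1, 1, 0],
    [1, 0, 0],
]
print(cloth_coverage(crumpled, flattened_area=4))  # 0.75
```

Under this assumed definition, a value above 0.8 would correspond to the "over 80% coverage" figure quoted above.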
