Finding the Winning Ticket of BERT for Binary Text Classification via Adaptive Layer Truncation before Fine-tuning


Nov 22, 2021

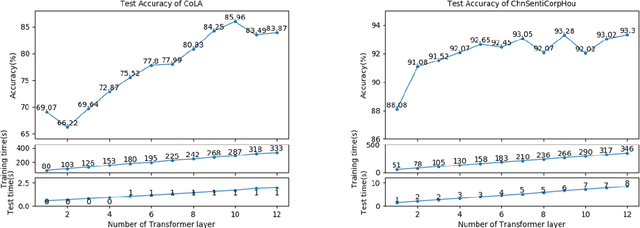

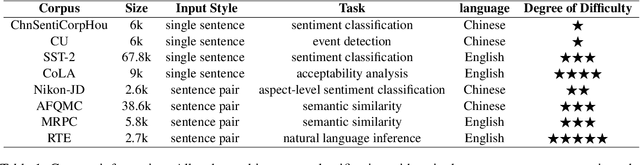

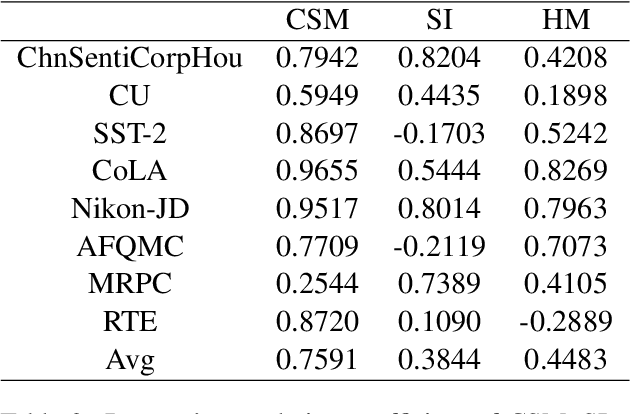

In light of the success of transferring language models to NLP tasks, we ask whether the full BERT model is always the best choice, and whether there exists a simple but effective method for finding the winning ticket in state-of-the-art deep neural networks without complex calculations. We construct a series of BERT-based models of different sizes and compare their predictions on 8 binary classification tasks. The results show that smaller sub-networks do exist that outperform the full model. We then present a further study and propose a simple method to shrink BERT appropriately before fine-tuning. Extended experiments indicate that our method can substantially reduce time and storage overhead with little or even no loss of accuracy.
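To give a rough sense of the storage side of layer truncation, the sketch below counts the parameters in a standard BERT-style encoder layer and shows how the encoder shrinks as layers are dropped. It assumes BERT-base dimensions (12 layers, hidden size 768, feed-forward size 3072), which the abstract does not specify, and it ignores the embedding and pooler parameters. The function names are hypothetical, and the sketch says nothing about how the paper chooses the number of layers to keep.

```python
def encoder_layer_params(hidden: int, intermediate: int) -> int:
    """Count weights and biases in one BERT-style Transformer encoder layer."""
    # Query, key, value, and attention-output projections: four hidden x hidden linears.
    attention = 4 * (hidden * hidden + hidden)
    # Feed-forward block: hidden -> intermediate -> hidden.
    feed_forward = (hidden * intermediate + intermediate) + (intermediate * hidden + hidden)
    # Two LayerNorms per layer, each with a scale and a shift vector.
    layer_norms = 2 * (2 * hidden)
    return attention + feed_forward + layer_norms


def encoder_params_after_truncation(
    kept_layers: int, hidden: int = 768, intermediate: int = 3072
) -> int:
    """Encoder parameter count when only `kept_layers` layers are retained.

    Defaults are BERT-base sizes (an assumption; the abstract does not state them).
    """
    return kept_layers * encoder_layer_params(hidden, intermediate)


if __name__ == "__main__":
    full = encoder_params_after_truncation(12)
    for kept in (12, 8, 4):
        params = encoder_params_after_truncation(kept)
        print(f"{kept:2d} layers: {params:,} encoder parameters ({params / full:.0%} of full)")
```

Because every encoder layer has the same shape, encoder storage scales linearly with the number of layers kept. The embedding table is unaffected by truncation, so the saving for the whole model is proportionally smaller than the encoder-only figures printed here.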
