FiMReSt: Finite Mixture of Multivariate Regulated Skew-t Kernels -- A Flexible Probabilistic Model for Multi-Clustered Data with Asymmetrically-Scattered Non-Gaussian Kernels


May 15, 2023

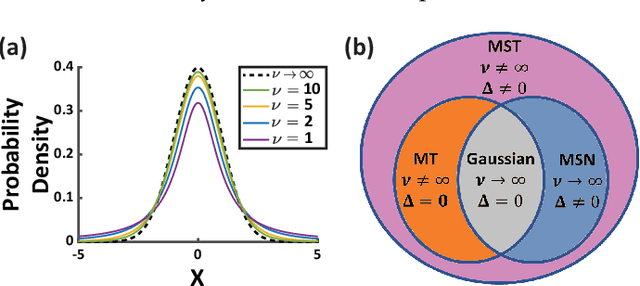

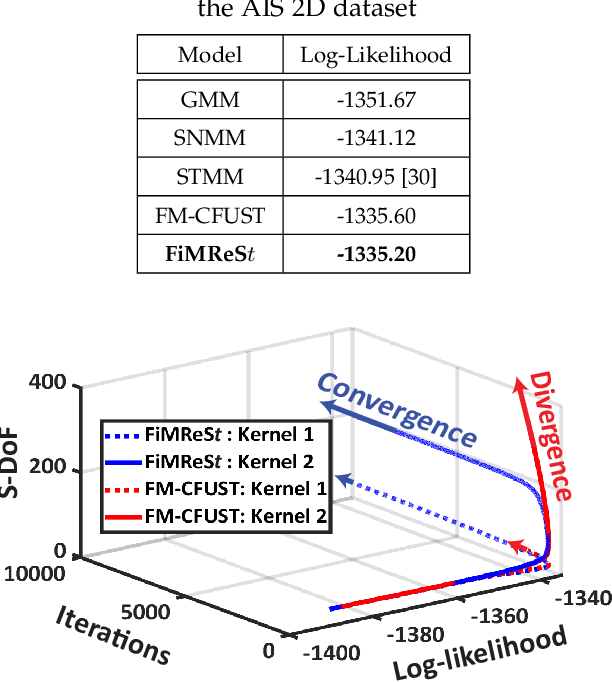

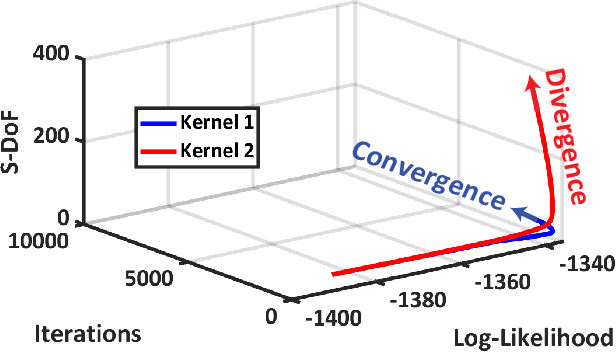

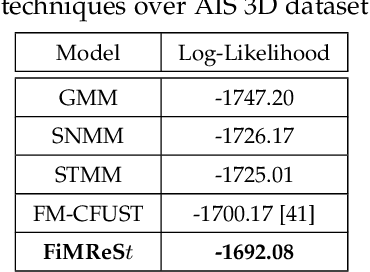

Recently, skew-t mixture models have been introduced as a flexible probabilistic modeling technique that accounts for both the skewness of data clusters and the statistical degree of freedom (S-DoF), improving modeling generalizability and robustness to heavy tails and skewness. In this paper, we show that state-of-the-art skew-t mixture models fundamentally suffer from a hidden phenomenon, which we call "S-DoF explosion," that results in local minima in the shapes of normal kernels during the non-convex iterative process of expectation maximization (EM). For the first time, this paper provides insight into the instability of the S-DoF, which can cause the kernels to diverge from the mixture of t-distributions, losing generalizability and the power to model outliers. We therefore propose a regularized iterative optimization process for training the mixture model that enhances the generalizability and resilience of the technique. The resulting mixture model, named Finite Mixture of Multivariate Regulated Skew-t (FiMReSt) Kernels, stabilizes the S-DoF profile during the learning optimization process. To validate its performance, we conducted comprehensive experiments on several real-world datasets and a synthetic dataset. The results highlight (a) the superior performance of FiMReSt, (b) its generalizability in the presence of outliers, and (c) the convergence of the S-DoF.
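To make the idea of an exploding degree of freedom concrete, the sketch below implements the standard EM update for the degrees of freedom ν of a single symmetric multivariate t kernel (the classic t-mixture M-step), not the skew-t update and not the FiMReSt regularizer, neither of which is specified here. It assumes one kernel with all responsibilities equal to 1 and precomputed squared Mahalanobis distances `deltas`. When every point lies at squared distance exactly p from the kernel (data lighter-tailed than any t), the update reduces to ν_new = ν_old + p, so ν grows without bound across iterations. The helper names `digamma` and `dof_update` and the clip to `[nu_min, nu_max]` are hypothetical; the clip is only a crude stand-in for regularization.

```python
import math


def digamma(x: float) -> float:
    """Digamma psi(x) for x > 0 via recurrence plus an asymptotic series."""
    result = 0.0
    # Shift x upward using psi(x) = psi(x + 1) - 1/x until the series is accurate.
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    result += (math.log(x) - 0.5 / x
               - inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252)))
    return result


def dof_update(nu_old, deltas, p, nu_min=1e-3, nu_max=1e3):
    """One EM M-step for the degrees of freedom of a single multivariate t kernel.

    deltas: squared Mahalanobis distances of the points to the kernel.
    The result is clipped to [nu_min, nu_max]; this clip is a hypothetical
    stand-in for a regularizer, not the FiMReSt method.
    """
    # E-step weights u_i = (nu_old + p) / (nu_old + delta_i).
    u = [(nu_old + p) / (nu_old + d) for d in deltas]
    m = sum(math.log(ui) - ui for ui in u) / len(u)
    a = (nu_old + p) / 2.0
    const = 1.0 + m + digamma(a) - math.log(a)

    def f(nu):
        # Left-hand side of the nu equation; strictly decreasing in nu.
        return -digamma(nu / 2.0) + math.log(nu / 2.0) + const

    if f(nu_max) > 0:  # root lies beyond the cap: nu is "exploding"
        return nu_max
    if f(nu_min) < 0:  # root lies below the floor
        return nu_min
    lo, hi = nu_min, nu_max
    for _ in range(200):  # bisection on the decreasing function f
        mid = 0.5 * (lo + hi)
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Light-tailed data: every point sits at squared distance p = 2 from the kernel.
p = 2
deltas = [2.0] * 50
nu = 3.0
for _ in range(5):
    nu = dof_update(nu, deltas, p)  # 3 -> 5 -> 7 -> 9 -> 11 -> 13
print(round(nu, 6))  # 13.0
print(dof_update(999.0, deltas, p))  # 1000.0 (unclipped root would be 1001)
```

With heavier-tailed distances (a few large `deltas`), the same update settles at a finite ν inside the bounds; only the clip keeps the light-tailed case from diverging, which is the kind of instability a principled regularizer is meant to control.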
