Faster k-Medoids Clustering: Improving the PAM, CLARA, and CLARANS Algorithms


Oct 12, 2018

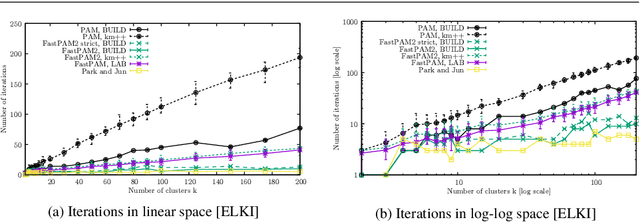

Clustering non-Euclidean data is difficult, and besides hierarchical clustering, one of the most widely used algorithms is PAM (Partitioning Around Medoids), also known as k-medoids. In Euclidean geometry the mean (as used in k-means) is a good estimator for the cluster center, but this does not hold for arbitrary dissimilarities. PAM uses the medoid instead: the object with the smallest total dissimilarity to all others in the cluster. This notion of centrality can be used with any (dis-)similarity, and is therefore highly relevant to domains such as biology that require Jaccard, Gower, or even more complex distances. A key issue with PAM, however, is its high run time. In this paper, we propose modifications to the PAM algorithm that, at the cost of storing O(k) additional values, achieve an O(k)-fold speedup in the second ("SWAP") phase of the algorithm while still finding the same results as the original PAM algorithm. If we slightly relax the choice of swaps performed (while retaining comparable quality), we can further accelerate the algorithm by performing up to k swaps in each iteration. We also show how the CLARA and CLARANS algorithms benefit from this modification. In experiments on real data with k=100, we observed a 200-fold speedup compared to the original PAM SWAP algorithm, making PAM applicable to larger data sets, and in particular to higher k.
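To make the medoid and the SWAP phase concrete, here is a minimal Python sketch of the baseline idea only. It is not the accelerated method proposed in the paper: it recomputes the full cost for every candidate swap, which is even slower than the original PAM. The function names (`medoid`, `total_deviation`, `naive_pam_swap`) are hypothetical, and the sketch assumes a precomputed dissimilarity matrix `dist` given as a list of lists.

```python
def medoid(dist, members):
    """Return the member with the smallest total dissimilarity to the others."""
    return min(members, key=lambda i: sum(dist[i][j] for j in members))


def total_deviation(dist, medoids):
    """Sum of each object's dissimilarity to its nearest medoid."""
    return sum(min(dist[i][m] for m in medoids) for i in range(len(dist)))


def naive_pam_swap(dist, medoids):
    """Naive SWAP: apply the best (medoid, non-medoid) swap until none improves.

    Assumption: every candidate is evaluated from scratch, so this only
    illustrates what SWAP optimizes, not how PAM or the paper computes it.
    """
    medoids = list(medoids)
    best = total_deviation(dist, medoids)
    while True:
        candidate = None
        for pos in range(len(medoids)):
            for x in range(len(dist)):
                if x in medoids:
                    continue
                # Replace the medoid at position `pos` with non-medoid `x`.
                trial = medoids[:pos] + [x] + medoids[pos + 1:]
                cost = total_deviation(dist, trial)
                if cost < best:
                    best, candidate = cost, trial
        if candidate is None:
            return sorted(medoids), best
        medoids = candidate


# Toy data: six points on a line; any dissimilarity matrix would work here.
points = [0, 1, 2, 10, 11, 12]
dist = [[abs(a - b) for b in points] for a in points]
result_medoids, result_cost = naive_pam_swap(dist, [0, 1])
```

Because only the matrix `dist` is consulted, the same code would run unchanged with Jaccard, Gower, or any other dissimilarity. The inner loops show where the cost comes from: each iteration tries every (medoid, non-medoid) pair, which is the work that the SWAP modifications described above aim to reduce.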
