Faster First-Order Algorithms for Monotone Strongly DR-Submodular Maximization


Nov 15, 2021

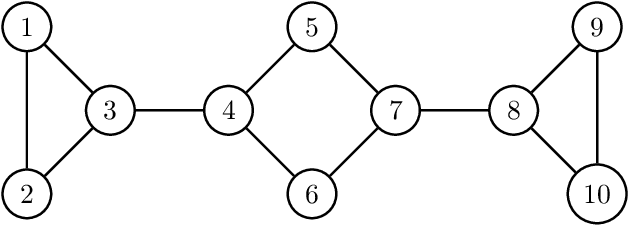

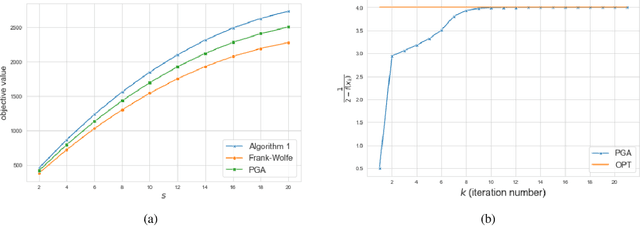

Continuous DR-submodular functions are a class of generally non-convex/non-concave functions that satisfy the Diminishing Returns (DR) property, which implies that they are concave along non-negative directions. Existing work has studied monotone continuous DR-submodular maximization subject to a convex constraint and provided efficient algorithms with approximation guarantees. In many applications, such as computing the stability number of a graph, the monotone DR-submodular objective function has the additional property of being strongly concave along non-negative directions (i.e., strongly DR-submodular). In this paper, we consider a subclass of $L$-smooth monotone DR-submodular functions that are strongly DR-submodular and have a bounded curvature, and we show how to exploit such additional structure to obtain faster algorithms with stronger guarantees for the maximization problem. We propose a new algorithm that matches the provably optimal $1-\frac{c}{e}$ approximation ratio after only $\lceil\frac{L}{\mu}\rceil$ iterations, where $c\in[0,1]$ and $\mu\geq 0$ are the curvature and the strong DR-submodularity parameter. Furthermore, we study the Projected Gradient Ascent (PGA) method for this problem, and provide a refined analysis of the algorithm with an improved $\frac{1}{1+c}$ approximation ratio (compared to $\frac{1}{2}$ in prior works) and a linear convergence rate. Experimental results illustrate and validate the efficiency and effectiveness of our proposed algorithms.
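To make the Projected Gradient Ascent (PGA) setting concrete, here is a minimal sketch that is not taken from the paper. It assumes a hypothetical quadratic objective $f(x) = b^\top x - \frac{1}{2} x^\top A x$ with an entrywise non-negative symmetric matrix $A$, so that the Hessian $-A$ has no positive entries and $f$ is DR-submodular. It also assumes a simple budget constraint $\{x \geq 0, \sum_i x_i \leq 1\}$ and a step size of $1/L$, with $L$ the spectral norm of $A$. The helper names `make_problem`, `project_capped_simplex`, and `projected_gradient_ascent` are illustrative only.

```python
import numpy as np


def project_capped_simplex(y, budget=1.0):
    """Euclidean projection onto {x >= 0, sum(x) <= budget}."""
    x = np.maximum(y, 0.0)
    if x.sum() <= budget:
        return x
    # Budget is violated: project onto {x >= 0, sum(x) = budget} by sorting.
    u = np.sort(y)[::-1]
    css = np.cumsum(u) - budget
    idx = np.arange(1, len(y) + 1)
    rho = np.nonzero(u - css / idx > 0)[0][-1]
    theta = css[rho] / (rho + 1)
    return np.maximum(y - theta, 0.0)


def make_problem(n=5, seed=0):
    """Hypothetical instance of f(x) = b^T x - 0.5 x^T A x (an assumption)."""
    rng = np.random.default_rng(seed)
    A = rng.uniform(0.0, 1.0, size=(n, n))
    # Symmetric, entrywise non-negative, diagonal entries at least 1.
    A = 0.5 * (A + A.T) + np.eye(n)
    # Since sum(x) <= 1, (A x)_i <= max_j A_ij, so the gradient b - A x
    # stays at least 0.1 on the feasible set and f is monotone there.
    b = A.max(axis=1) + 0.1
    return A, b


def projected_gradient_ascent(A, b, steps=200):
    """Plain PGA with step size 1/L, starting from x = 0."""
    f = lambda x: b @ x - 0.5 * x @ A @ x
    L = np.linalg.norm(A, 2)  # smoothness constant of the quadratic
    x = np.zeros(len(b))
    values = [f(x)]
    for _ in range(steps):
        grad = b - A @ x
        x = project_capped_simplex(x + grad / L)
        values.append(f(x))
    return x, values
```

Calling `projected_gradient_ascent(*make_problem())` returns a feasible point together with the objective value at every iterate. With step size $1/L$ on an $L$-smooth objective, these values should be non-decreasing, which is a convenient sanity check. The sketch does not reproduce the paper's new algorithm or its approximation guarantees.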
