Fast Wavelet-Based Visual Classification

Paper and Code

Jun 08, 2008

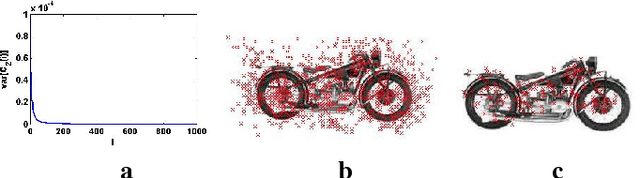

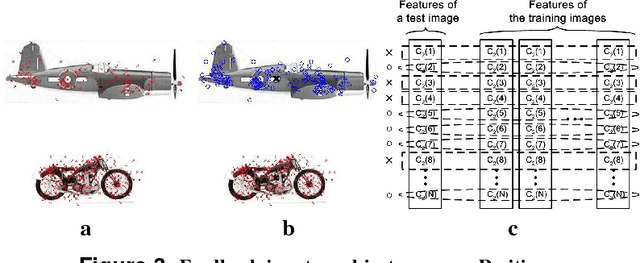

We investigate a biologically motivated approach to fast visual classification, directly inspired by the recent work of Serre et al. Specifically, trading off biological accuracy for computational efficiency, we explore using wavelet and grouplet-like transforms to parallel the tuning of V1 and V2 cells in the visual cortex, alternated with max operations to achieve scale and translation invariance. A feature selection procedure applied during learning accelerates recognition. We also introduce a simple attention-like feedback mechanism that significantly improves recognition and robustness in multiple-object scenes. In experiments, the proposed algorithm matches or exceeds state-of-the-art success rates on object recognition, texture and satellite image classification, language identification, and sound classification.
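To make the idea of alternating a wavelet transform with a max operation concrete, here is a minimal sketch. It is not the authors' implementation: it assumes a single-level Haar transform as a stand-in for the wavelet stage, omits the grouplet-like, feature selection, and feedback stages entirely, and uses hypothetical names (`haar_details`, `max_pool`, `signature`, `make_edge`) chosen only for illustration.

```python
def haar_details(img):
    """One level of a 2D Haar transform on non-overlapping 2x2 blocks.

    Assumption: Haar is used here only as a simple stand-in wavelet.
    Returns magnitude maps for horizontal, vertical and diagonal details.
    """
    rows, cols = len(img) // 2, len(img[0]) // 2
    horiz = [[0.0] * cols for _ in range(rows)]
    vert = [[0.0] * cols for _ in range(rows)]
    diag = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            a, b = img[2 * i][2 * j], img[2 * i][2 * j + 1]
            c, d = img[2 * i + 1][2 * j], img[2 * i + 1][2 * j + 1]
            horiz[i][j] = abs(a + b - c - d) / 2.0  # top vs. bottom row
            vert[i][j] = abs(a - b + c - d) / 2.0   # left vs. right column
            diag[i][j] = abs(a - b - c + d) / 2.0   # diagonal contrast
    return horiz, vert, diag


def max_pool(fmap, size):
    """Maximum over non-overlapping size x size windows of a feature map."""
    rows, cols = len(fmap) // size, len(fmap[0]) // size
    return [
        [
            max(
                fmap[size * i + di][size * j + dj]
                for di in range(size)
                for dj in range(size)
            )
            for j in range(cols)
        ]
        for i in range(rows)
    ]


def signature(img, pool=2):
    """Wavelet stage followed by a max stage, one pooled map per orientation."""
    return [max_pool(fmap, pool) for fmap in haar_details(img)]


def make_edge(n, col):
    """Hypothetical test image: n x n, dark left of column `col`, bright after."""
    return [[1.0 if j >= col else 0.0 for j in range(n)] for _ in range(n)]
```

In this sketch, a vertical edge at column 1 and one at column 3 of an 8x8 image fall into the same pooling window, so `signature` returns identical maps for both, which is the local translation tolerance the max operation is meant to provide. An edge moved to column 5 lands in a different window and produces a different signature.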
